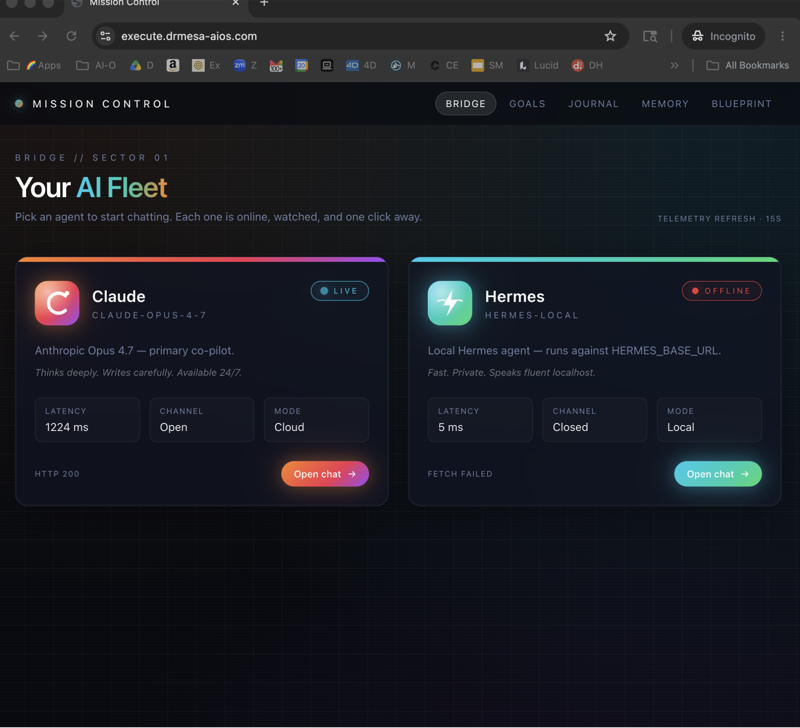

Ollama + Hermes has officially replaced OpenClaw as my daily-driver AI agent setup — and I want to walk you through exactly why.

I've been testing AI agents for months.

OpenClaw, Hermes, Paperclip setups, custom configurations: I've tried them all.

Here's my honest take after running both in production:

OpenClaw is powerful, but it's bloody buggy.

Hermes is smoother, faster, and just... works.

And with Ollama's brand new one-click Hermes launch feature, the setup is genuinely the easiest I've ever seen.


Why Ollama + Hermes Just Became the Best AI Agent Setup

Before we get into the setup, let me explain why this combo is genuinely special.

Ollama Gives You Model Freedom

Ollama is an app that lets you run AI models on your own machine.

That covers free local models (like Gemma 4 and Qwen), and it also gives you access to cloud models with generous free tiers (GLM, MiniMax, Kimi).

Hermes Gives You an Actual Agent

Hermes isn't just a chatbot.

It's an autonomous AI agent that lives in your terminal, can connect to Telegram, and can run actual work for you.

Research, coding, social media automation, content creation — Hermes handles it.

The Combo Is Free and One-Click

Before this update, setting up Hermes was technical.

Now?

One command: ollama launch hermes.

That's the whole setup.

The Brutal Truth About OpenClaw vs Hermes

Let me just say it.

I've been vocal about this on my YouTube channel.

OpenClaw is powerful but buggy.

I've had OpenClaw setups break mid-session.

I've had it fail to use tools it should have access to.

I've had configuration issues that took hours to resolve.

Hermes doesn't do any of that.

It's smoother.

It uses tools better.

It maintains context more reliably.

And it's genuinely agentic — meaning it actually takes initiative rather than just responding to prompts.

When Hermes Wins

- You want reliability over maximum capability

- You're non-technical and want a quick setup

- You need something that "just works"

- You want Telegram integration

When OpenClaw Still Wins

- You're a developer who loves customisation

- You need specific integrations Hermes doesn't have

- You want the absolute cutting edge of features

For 99% of my work, Hermes wins.


Setting Up Ollama + Hermes (The Actual Commands)

Right, enough theory.

Here's the exact setup.

Install Ollama First

Go to ollama.com and grab the latest version.

If you've got an older version installed, update it.

New models drop all the time, and the latest Ollama handles them better.

Open Terminal and Run One Command

ollama launch hermes

That's it.

Ollama will spin up Hermes and ask you which model you want to use.
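If you'd rather script that step, here's a minimal Python sketch that checks Ollama is on your PATH before launching. The only thing taken from this post is the `ollama launch hermes` command itself; the function names are my own illustrative choices, not part of Ollama or Hermes.

```python
import shutil
import subprocess

# The one command from this post, split into arguments for subprocess.
LAUNCH_COMMAND = ["ollama", "launch", "hermes"]


def ollama_installed() -> bool:
    """Return True if an `ollama` executable is found on PATH."""
    return shutil.which("ollama") is not None


def launch_hermes() -> int:
    """Run the launch command in the current terminal and return its exit code."""
    if not ollama_installed():
        print("Ollama not found. Install it from ollama.com first.")
        return 1
    # subprocess.call inherits the terminal, so the model picker stays interactive.
    return subprocess.call(LAUNCH_COMMAND)
```

Calling `launch_hermes()` from a script does the same thing as typing the command by hand, with a friendlier message if Ollama is missing.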

Pick Your Model

You'll see a list of recommended options:

| Model | Type | Speed | Recommendation |
|---|---|---|---|
| GLM 5.1 Cloud | Cloud | Fast | ⭐ Best starting point |
| MiniMax M2.7 Cloud | Cloud | Fast | ⭐ Best for tool use |
| Kimi K2.5 Cloud | Cloud | Fast | Great for reasoning |
| Qwen 3.5 Cloud | Cloud | Fast | Strong all-rounder |
| Gemma 4 | Local | Slow | Only if you want 100% free |

Cloud models are free up to usage limits.

Local models are completely free but slower.
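If you like to keep these trade-offs written down, here's a rough Python sketch that encodes the table as data and filters it. The names are display names copied from the table (spelled Kimi and MiniMax, the vendors' own spellings), not Ollama model tags, and `shortlist` is a hypothetical helper of my own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    kind: str   # "cloud" or "local"
    speed: str  # "fast" or "slow"
    note: str   # recommendation from the table, lowercased


# Rows copied from the table above; display names, not Ollama model tags.
OPTIONS = [
    ModelOption("GLM 5.1 Cloud", "cloud", "fast", "best starting point"),
    ModelOption("MiniMax M2.7 Cloud", "cloud", "fast", "best for tool use"),
    ModelOption("Kimi K2.5 Cloud", "cloud", "fast", "great for reasoning"),
    ModelOption("Qwen 3.5 Cloud", "cloud", "fast", "strong all-rounder"),
    ModelOption("Gemma 4", "local", "slow", "only if you want 100% free"),
]


def shortlist(must_be_local: bool = False, need: str = "") -> list[str]:
    """Filter the table by local-only and by a keyword in the note."""
    return [
        option.name
        for option in OPTIONS
        if (option.kind == "local" or not must_be_local)
        and need.lower() in option.note
    ]
```

For example, `shortlist(need="tool use")` narrows the list to the MiniMax row, and `shortlist(must_be_local=True)` leaves only Gemma 4.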

Hit enter on your choice and Hermes launches.

You now have a working AI agent.

🔥 Want the complete 2-hour Hermes training course?

Inside the AI Profit Boardroom, I've built a full Hermes classroom with the one-click Ollama setup, Telegram connection walkthrough, custom skill creation, and real automation use cases. Plus a 6-hour OpenClaw course if you want to master both. 3,000+ members inside using these exact setups. Jump on weekly coaching calls and I'll help you configure YOUR workflow live.

My Real-World Ollama + Hermes Workflow

Let me show you how I'm actually using this.

I run Hermes via Ollama on my Mac Studio.

It's connected to Telegram, so I can message my AI agent from my phone.

I've got custom skills set up for:

- Social media automation — schedule posts, draft replies, manage content

- Research tasks — pull data from URLs, summarise findings, compile reports

- Content workflows — draft blog posts, create outlines, generate ideas

- Admin tasks — scheduling, email drafting, task management

When I switched from OpenClaw to Hermes via Ollama, my setup transferred seamlessly.

All my skills still work.

All my memory is intact.

The experience is just noticeably smoother.

The Speed Test: Cloud vs Local Models

This is important.

I tested both cloud and local models with Ollama + Hermes.

Here's what I found:

Gemma 4 (Local)

Took over a minute to respond to a simple "are you working?" message.

On a Mac Studio with Apple M4 Max.

That's not fast.

Gemma 4 is designed for mobile devices, so it's lightweight but slow on desktop.

GLM 5.1 Cloud

Responded in seconds.

Handled complex queries smoothly.

Maintained context across long sessions.

MiniMax M2.7 Cloud

Best tool use I've seen in this price range.

Very agentic — takes initiative instead of just responding.

My go-to for serious automation work.

Verdict: Unless you absolutely need 100% free, stick with cloud models.
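If you want to run a similar comparison yourself, here's a minimal timing sketch in Python. It talks to Ollama's local REST endpoint (`/api/generate` on the default port 11434) rather than to Hermes, so it measures raw model latency only and won't match my Hermes numbers exactly. The model tag you pass in is up to you (check `ollama list` for what you have), and the helper names are my own.

```python
import json
import time
import urllib.request

# Ollama's local REST endpoint on its default port.
GENERATE_URL = "http://localhost:11434/api/generate"


def ask_ollama(model: str, prompt: str) -> str:
    """Send one non-streaming prompt to the local Ollama server."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    request = urllib.request.Request(
        GENERATE_URL,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]


def time_reply(ask, model: str, prompt: str = "are you working?"):
    """Return (reply, seconds) for one call to ask(model, prompt)."""
    start = time.perf_counter()
    reply = ask(model, prompt)
    return reply, time.perf_counter() - start
```

With Ollama running, `time_reply(ask_ollama, "<your-model-tag>")` gives you the reply and how long it took. Run it a few times per model, because the first call often includes model load time.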

Switching Models Mid-Workflow

One of my favourite things about Ollama + Hermes.

You can swap models on the fly.

Here's how:

- Press Control + C to end your current Hermes session

- Run ollama launch hermes again

- Pick a different model

- Everything resumes: skills, memory, configuration all intact
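If you swap models a lot, you could wrap those steps in a tiny loop. This is only a sketch under my own assumptions: `session_loop` is a hypothetical helper, and it simply reruns the same `ollama launch hermes` command each time you answer "y", leaving the model choice to Ollama's own picker.

```python
import subprocess

LAUNCH_COMMAND = ["ollama", "launch", "hermes"]


def session_loop(run=subprocess.call, ask=input) -> int:
    """Relaunch Hermes until the user declines; return how many sessions ran."""
    sessions = 0
    while True:
        sessions += 1
        try:
            run(LAUNCH_COMMAND)
        except KeyboardInterrupt:
            # Control + C ends the session; carry on to the relaunch prompt.
            pass
        answer = ask("Relaunch to pick a different model? [y/N] ")
        if answer.strip().lower() != "y":
            return sessions
```

The `run` and `ask` parameters exist so the loop can be tried out with stand-ins before pointing it at a real Hermes session.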

Why would you want to do this?

- Testing — compare how different models handle your workflows

- Cost management — switch to free local models for simple tasks

- Quality boost — switch to cloud models for complex reasoning

- Specific capabilities — some models are better at certain tasks

The flexibility is brilliant.


Why Telegram Integration Changes Everything

Here's something I didn't appreciate until I set it up properly.

Hermes + Telegram is a killer combo.

Once you've got Hermes running via Ollama on your Mac, you can connect it to Telegram.

Then you can:

- Message your AI agent from your phone wherever you are

- Trigger automations remotely without being at your computer

- Get notifications and updates through Telegram channels

- Chat with your AI like a personal assistant during commutes or travel

I was worried that switching to Ollama would break my Telegram setup.

It didn't.

My Hermes on Telegram works with the new Ollama model provider exactly the same as before.

That's seamless integration at its best.

The Free Forever Setup

Want to run this completely free forever?

Here's the blueprint:

- Install Ollama — free

- Download a local model (Gemma 4 or similar) — free

- Run ollama launch hermes with your local model — free

- Use it for basic tasks where speed doesn't matter

You'll trade speed for cost, but you'll have a working AI agent that never charges you a penny.

For serious work though, I'd recommend using cloud models up to their free limits.

You get way more capability and the free tier is usually enough.
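Before relying on the free local route, it's worth confirming a model is actually downloaded (for example with `ollama pull`). Here's a small Python sketch that reads Ollama's `/api/tags` endpoint, which lists the models your install knows about. The helper names are my own, and the prefix you search for is whatever tag your chosen model uses.

```python
import json
import urllib.request

# Lists the models known to the local Ollama server (default port).
TAGS_URL = "http://localhost:11434/api/tags"


def fetch_tags(url: str = TAGS_URL) -> dict:
    """Fetch the raw /api/tags response from a running Ollama server."""
    with urllib.request.urlopen(url) as response:
        return json.loads(response.read())


def installed_models(tags_payload: dict) -> list[str]:
    """Extract model names from an /api/tags response body."""
    return [entry["name"] for entry in tags_payload.get("models", [])]


def has_model(names: list[str], prefix: str) -> bool:
    """True if any installed model name starts with the given prefix."""
    return any(name.lower().startswith(prefix.lower()) for name in names)
```

With Ollama running, `has_model(installed_models(fetch_tags()), "<model-prefix>")` tells you whether the local model is ready before you launch Hermes.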

🔥 Running Hermes or OpenClaw but not getting real results? Let's fix that.

Inside the AI Profit Boardroom, I share the exact automation playbooks I use with Ollama + Hermes — lead generation workflows, content automation systems, SEO pipelines, and client outreach agents. Real use cases, not theory. 3,000+ members are building AI-powered businesses with this stuff. Jump on weekly coaching calls and we'll review YOUR setup live.

Common Questions From the Community

Here are the questions I get most often about Ollama + Hermes:

"Is Paperclip + Hermes + Opus 4.7 the best agentic setup?"

For most people, no — it's overkill.

A basic setup with Hermes and a decent cloud model like MiniMax or GLM 5.1 is more than enough.

Don't over-engineer.

"What's the difference between Ollama + Hermes vs OpenClaw?"

OpenClaw is more technical, takes longer to set up, and has more customisation.

Ollama + Hermes is simpler, faster to launch, and less buggy.

Choose based on your technical level and what you actually need.

"Are there free models on OpenRouter for Hermes?"

Yes — Elephant Alpha is a solid free option.

It's being used by Hermes, Claude Code, and OpenClaw users.

Fast and surprisingly capable for a free model.

"Should I use TurboQuant for faster local models?"

If you're running models through Hugging Face, yes — it can speed them up significantly.

With Ollama specifically, that's not really part of the workflow.

Stick to the cloud models for speed with Ollama.

Ollama + Hermes: Frequently Asked Questions

What's the difference between Ollama + Hermes and just using Hermes alone?

Ollama provides the model infrastructure — it's what serves the AI model that Hermes uses as its brain. Without a model provider, Hermes can't do anything. Ollama's new one-click launch makes connecting these two incredibly simple.

Can I use Ollama + Hermes on Windows?

Ollama supports Windows, Mac, and Linux. The ollama launch hermes command should work across all platforms, though the experience is smoothest on Mac in my testing.

How do I switch models after setting up Ollama + Hermes?

Press Control + C to end your current Hermes session, then run ollama launch hermes again. You'll be able to select a different model while keeping all your existing skills and memory intact.

Is Ollama + Hermes safe to use?

Generally yes, but don't give it access to anything you're uncomfortable with. If you're not sure about an API or permission, don't grant it. Start small, learn how the system works, then expand capabilities as you get comfortable.

Why is my local model with Ollama so slow?

Local models run on your hardware, so speed depends on your machine. Even on a Mac Studio with M4 Max, smaller local models like Gemma 4 can take anywhere from 30 seconds to over a minute to respond. Cloud models are significantly faster and free up to usage limits.

Can Hermes automate social media through Ollama?

Yes — Hermes has skills for social media automation built in. Connect it to your accounts, set up automation workflows, and let it run. It's one of the most common use cases I see in the community.

Ollama + Hermes is the AI agent setup I wish I'd had six months ago — and if you're still fighting with OpenClaw configurations, it's time to try Ollama + Hermes.