If you're still paying a designer in 2026, this ChatGPT Image 2 tutorial is going to sting a bit.

I'm not trying to be dramatic.

I'm just telling you what I actually saw when I ran ChatGPT Image 2 against every major image model on the market.

It ended the competition.

In this post I'll show you the exact results from six head-to-head tests, the prompt workflow I'm using, and why the Thinking mode update is the single biggest image-gen leap since DALL·E dropped.

ChatGPT Image 2 Tutorial: The Scores That Made Me Cancel My Design Budget

Let's start with cold numbers.

ELO scores across the leading image models right now:

- ChatGPT Image 2: 1512

- GPT Image 1.5: 1241

- Gemini (Nano Banana 2): 1271

- Grok Imagine: 1170

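If you don't follow ELO, the roughly 240-point gap over the next-best model (Gemini, at 1271) is enormous.

In head-to-head terms, it means ChatGPT Image 2 should be preferred in about four out of every five comparisons against it.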
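For anyone who wants to check that, here's the textbook Elo expected-score formula, E = 1 / (1 + 10^((R_b - R_a) / 400)), applied to the scores above. The chart doesn't say exactly how its ratings were computed, so treat this as the standard Elo reading of the numbers, not the leaderboard's own methodology.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Textbook Elo expected score for A against B (0.5 means evenly matched)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Scores from the chart above; ChatGPT Image 2 sits at 1512.
scores = {
    "GPT Image 1.5": 1241,
    "Gemini (Nano Banana 2)": 1271,
    "Grok Imagine": 1170,
}

for name, rating in scores.items():
    print(f"ChatGPT Image 2 vs {name}: {expected_win_rate(1512, rating):.0%}")
```

Against Gemini's 1271 it comes out at about 80%; the bigger gaps to GPT Image 1.5 and Grok Imagine push it higher still.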

And the best part?

It's in the free tier of ChatGPT.

Start Here: What You Need For The ChatGPT Image 2 Tutorial

You need one of these:

- A free ChatGPT account (gets the base model)

- A Plus, Pro, or Business account (gets Thinking mode)

- An OpenAI API key via platform.openai.com (for devs)

Image generation works on:

- ChatGPT web

- iOS app

- Android app

- API (model name: `gpt-image-2`)

That's it. No extension. No separate subscription. No token juggling.

Thinking Mode — Why This Changes Everything

Every previous image model was basically a souped-up autocomplete.

You type "cyberpunk cat" and it predicts pixels.

ChatGPT Image 2 with Thinking mode doesn't work like that.

Before it draws anything, it:

- Reads your prompt carefully

- Plans the composition

- Considers details you forgot to mention

- Sketches the image internally

- Then generates the final output

Think of it like hiring an art director who asks all the right questions before opening Photoshop.

My bet is that the reasoning layer is the main reason this model sits at 1512 ELO.

🔥 The exact prompts behind every test in this post

Inside the AI Profit Boardroom I've put the full prompt library up (movie posters, comics, maps, logos, mockups), all copy-paste. Plus weekly coaching calls where you can drop your own prompt in and I'll tune it on the call. 3,000+ members inside already.

→ Grab the prompt library + training

The Six Tests I Ran Live In This ChatGPT Image 2 Tutorial

Every test: same prompt, ChatGPT Image 2 vs Gemini Nano Banana 2.

1. Hyperrealistic movie poster — "The Last Noodle"

ChatGPT Image 2 nailed:

- The tagline text (legible, well-placed)

- Character detail

- Lighting and mood

- Composition

Gemini's poster looked like a student project.

Not close.

2. 8-panel comic about a goldfish

Comics are the hardest test for image models because you need consistency across panels AND readable dialogue.

ChatGPT Image 2: richer colours, better dialogue, tighter panels.

Gemini: muddy, inconsistent character, dialogue half-garbled.

3. Logo for Goldie Agency

This was the closest test.

Gemini's logo was actually decent.

ChatGPT Image 2's was slightly cleaner but not a landslide.

If you're just making quick logos, either tool works.

4. Fantasy world map

This is where most models die horribly.

You need:

- Continents

- Oceans with proper coastlines

- Place name labels (legible)

- Terrain features

- A coherent overall style

ChatGPT Image 2 handled it like it was routine.

Every other model I've tested on maps has produced something that looks like melted cheese.

5. LinkedIn profile for a dog

I built a fake LinkedIn for "Biscuit the emotional support specialist."

The result was funny AND realistic.

Every visual element (avatar, banner, profile styling) matched the real LinkedIn layout.

6. Book mockup in a cafe scene

I uploaded a book cover and asked it to place the book on a cafe table.

Perfect lighting match.

Shadows correct.

Perspective correct.

Looked like a product photographer staged it.

The Claude Sonnet 4.6 Prompt Trick

Here's the trick nobody's sharing.

Don't write your image prompts yourself.

Write them with Claude Sonnet 4.6.

Here's why:

Claude Sonnet 4.6 is absurdly good at breaking a 1-line idea into a structured 300-word image prompt.

Composition. Lighting. Style. Mood. Subject. Colour palette. Camera angle.

All spelled out.

When you drop a Claude-generated prompt into ChatGPT Image 2, the reasoning layer has everything it needs.

The results go from good to stupid good.
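If you'd rather script the Claude step than paste into the chat window, here's a minimal Python sketch using Anthropic's `anthropic` package and its Messages API. The seven section names come straight from the list above; the `CLAUDE_MODEL` string is a placeholder I've made up, so swap in whatever ID Anthropic lists for Sonnet 4.6.

```python
# The structured sections the expanded prompt should spell out.
SECTIONS = ["Subject", "Composition", "Lighting", "Style", "Mood", "Colour palette", "Camera angle"]

# Hypothetical model ID: check Anthropic's model list for the real Sonnet 4.6 identifier.
CLAUDE_MODEL = "claude-sonnet-4-6"


def build_expansion_prompt(idea: str, words: int = 300) -> str:
    """Build the instruction that asks Claude to expand a one-line idea."""
    headings = "\n".join(f"- {name}:" for name in SECTIONS)
    return (
        f"Expand this one-line idea into a structured image prompt of about {words} words.\n"
        f"Idea: {idea}\n"
        f"Use exactly these labelled sections:\n{headings}\n"
        "Return only the prompt text."
    )


def expand_with_claude(idea: str) -> str:
    """Send the expansion request to Claude. Needs `pip install anthropic` and ANTHROPIC_API_KEY."""
    import anthropic  # imported here so build_expansion_prompt works without the SDK installed

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    message = client.messages.create(
        model=CLAUDE_MODEL,
        max_tokens=1024,
        messages=[{"role": "user", "content": build_expansion_prompt(idea)}],
    )
    return message.content[0].text  # first content block holds the generated text
```

`expand_with_claude("cyberpunk cat")` returns a structured prompt you can paste straight into ChatGPT Image 2.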

I covered my full Claude workflow in the Claude Opus 4.7 for AI SEO breakdown — same principle applies here.

Editing Images — The Underrated Feature

ChatGPT Image 2 isn't just a generator.

It's an editor.

You can:

- Upload any image

- Select a specific area (like a mask)

- Prompt only that area

- Add elements without regenerating the whole image

I added a volcano to my fantasy map with a two-word prompt.

Seamless.

It also auto-detects intent: the model works out from your wording whether you want an edit or a new image.
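The in-app selection tool is point-and-click, but if you're doing masked edits through the API, OpenAI's Images edit endpoint takes a PNG mask whose fully transparent pixels mark the area to change. Here's a stdlib-only sketch that builds a rectangular mask and sends it. The `gpt-image-2` model ID is taken from this post, the helper names are my own, and I haven't confirmed that ChatGPT's selection tool works this way under the hood.

```python
import struct
import zlib


def rect_mask_alpha(width, height, box):
    """Alpha grid: 0 (transparent = editable) inside box, 255 (keep) everywhere else."""
    left, top, right, bottom = box
    return [
        [0 if left <= x < right and top <= y < bottom else 255 for x in range(width)]
        for y in range(height)
    ]


def _png_chunk(tag: bytes, data: bytes) -> bytes:
    # Each PNG chunk: big-endian length, type, data, then CRC-32 over type + data.
    crc = zlib.crc32(tag + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + tag + data + struct.pack(">I", crc)


def encode_mask_png(alpha_rows) -> bytes:
    """Encode the alpha grid as an 8-bit RGBA PNG (black pixels, varying alpha)."""
    height, width = len(alpha_rows), len(alpha_rows[0])
    raw = bytearray()
    for row in alpha_rows:
        raw.append(0)  # filter type 0 (None) at the start of every scanline
        for alpha in row:
            raw += bytes((0, 0, 0, alpha))
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 6, 0, 0, 0)  # 8-bit depth, colour type 6 = RGBA
    return (
        b"\x89PNG\r\n\x1a\n"
        + _png_chunk(b"IHDR", ihdr)
        + _png_chunk(b"IDAT", zlib.compress(bytes(raw)))
        + _png_chunk(b"IEND", b"")
    )


def edit_with_mask(image_path: str, mask_path: str, prompt: str):
    """Masked edit via the OpenAI SDK (needs `pip install openai` and OPENAI_API_KEY)."""
    from openai import OpenAI  # imported here so the mask helpers work without the SDK

    client = OpenAI()
    with open(image_path, "rb") as image, open(mask_path, "rb") as mask:
        # The mask must be a PNG with the same dimensions as the source image.
        return client.images.edit(model="gpt-image-2", image=image, mask=mask, prompt=prompt)
```

For the volcano example you'd write `encode_mask_png(rect_mask_alpha(1024, 1024, (600, 200, 800, 400)))` to `mask.png` and call `edit_with_mask("map.png", "mask.png", "add a volcano")`; the image size and box coordinates there are made up for illustration.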

Pairing It With Codex 2.0 For UI

Bonus stack I've been running:

- Codex 2.0 generates UI mockups for landing pages

- ChatGPT Image 2 generates the hero images, product shots, and social creative

- Claude Sonnet 4.6 writes the copy

I'm genuinely finding that Codex mockups look better than the pages I actually code from them.

That's a weird sentence to type.

But it's true.

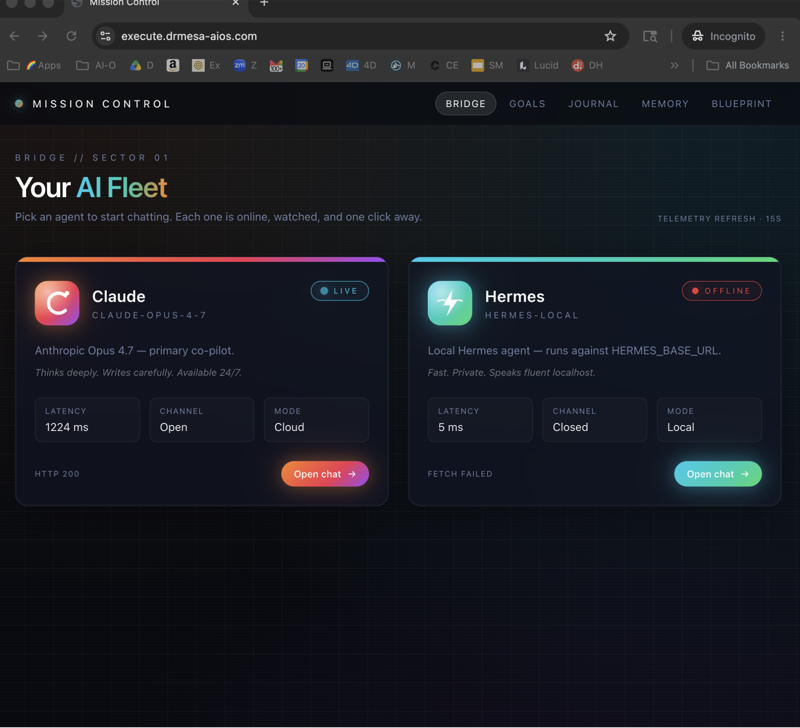

API Setup For Builders

If you want to wire ChatGPT Image 2 into your own stack:

- Go to platform.openai.com

- Generate an API key

- Model ID is `gpt-image-2`

From there:

- Hermes: tools → reconfigure → vision, paste key

- OpenClaw 4.21: paste the API docs link + key in the integrations panel

- Custom app: use the OpenAI SDK and call `images.generate`

I walked through the Hermes vision pipeline in my Hermes AI Video Generator post — same auth flow for images.
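If you're going the custom-app route, here's a minimal Python sketch using the official `openai` package. It assumes `gpt-image-2` is called exactly like `gpt-image-1` (same `images.generate` parameters, base64 output in `data[0].b64_json`); I haven't verified every parameter against the new model, so check the API reference before shipping it.

```python
import base64
from pathlib import Path

# Model ID as given in this post; confirm it on platform.openai.com before relying on it.
MODEL_ID = "gpt-image-2"


def decode_image_payload(b64_data: str) -> bytes:
    """Turn the base64 string returned by the API into raw image bytes."""
    return base64.b64decode(b64_data)


def generate_image(prompt: str, out_path: str = "out.png", size: str = "1024x1024") -> Path:
    """Generate one image and save it. Needs `pip install openai` and OPENAI_API_KEY set."""
    from openai import OpenAI  # imported here so decode_image_payload works without the SDK

    client = OpenAI()  # picks up OPENAI_API_KEY from the environment
    # Assumption: gpt-image-2 accepts gpt-image-1's size strings and returns b64_json.
    result = client.images.generate(model=MODEL_ID, prompt=prompt, size=size)
    out = Path(out_path)
    out.write_bytes(decode_image_payload(result.data[0].b64_json))
    return out
```

Calling `generate_image("Hyperrealistic movie poster for 'The Last Noodle' with a legible tagline")` writes `out.png` to the current folder. The SDK import lives inside the function only so the decoding helper can be tested without the package installed.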

Video notes + links to the tools 👉 https://www.skool.com/ai-profit-lab-7462/about

Speed + Specs

- Generation time: ~43 seconds typical

- Aspect ratios: square, landscape, story (9:16), ultra-wide

- Aspect picker: top right of the chat

- Edit mode: yes

- Selection/mask editing: yes

- Auto-intent detection: yes (generate vs edit)

Where I'm Using It Daily

- Blog post hero images (this one included)

- YouTube thumbnails

- Skool community banners

- Ad creative for the Boardroom

- Book and course mockups

- Fake document mockups for tutorials

- Comic explainers for complex topics

Basically my entire creative pipeline now routes through ChatGPT Image 2.

Stop guessing at prompts: get the templates

Inside the AI Profit Boardroom you get my full prompt library for ChatGPT Image 2 plus weekly coaching where we tune prompts on screen. 3,000+ members already using this. No fluff, just working prompts.

→ Join the Boardroom

Related Reading

- GPT-5.5 Pro — the text model that pairs with Image 2

- ChatGPT Workspace Agents — running ChatGPT as a connected agent

- OpenMythos — creative storytelling stack

- Hermes AI Video Generator — next-step video pipeline

Learn how I make these videos 👉 https://aiprofitboardroom.com/

FAQ

Does ChatGPT Image 2 cost extra?

No. It's included in the free tier of ChatGPT. Thinking mode is Plus/Pro/Business only, and API usage is billed separately through platform.openai.com.

What's the generation speed?

Around 43 seconds per image in my tests.

Can it render readable text in images?

Yes — this is the first model I've used where posters, newspapers, receipts, and comic dialogue are consistently legible.

How does ChatGPT Image 2 compare to Midjourney?

No official ELO for Midjourney in OpenAI's chart, but based on my hands-on tests ChatGPT Image 2 is ahead on text rendering, composition control, and editing. Midjourney still has a stylistic edge in pure painterly renders.

Can I use ChatGPT Image 2 commercially?

Per OpenAI's terms, yes. Always check the latest terms for your specific use case.

What's the best way to write prompts for ChatGPT Image 2?

Use Claude Sonnet 4.6 to expand a 1-sentence idea into a 300-word structured prompt. Drop that into ChatGPT. Massive quality lift.

Can I use ChatGPT Image 2 via API?

Yes. Model ID gpt-image-2 at platform.openai.com. Works with Hermes, OpenClaw 4.21, and any custom build.

Get a FREE AI Course + Community + 1,000 AI Agents 👉 https://www.skool.com/ai-seo-with-julian-goldie-1553/about

That's the playbook. Now go run your own ChatGPT Image 2 tutorial on something you've been paying a designer to make.