Opus 4.7 is now live inside OpenClaw 4.15, and I've spent the last few days testing every single change.

Here's my honest take.

This update doesn't have one big flashy feature.

What it has is a dozen things that were quietly annoying, quietly broken, or quietly slowing you down — and they're all fixed now.

That might not sound exciting.

But when your AI agent sessions stop breaking randomly, when your memory files stop getting cluttered with junk, when your local models stop choking on oversized prompts — that's when you actually start trusting the tool enough to let it run your business.

And trust is everything with AI automation.

So let me walk you through what changed, what I'm doing with it, and what you should set up this week.


Opus 4.7: The Brain Upgrade Your AI Agent Needed

Let's start with the biggest change.

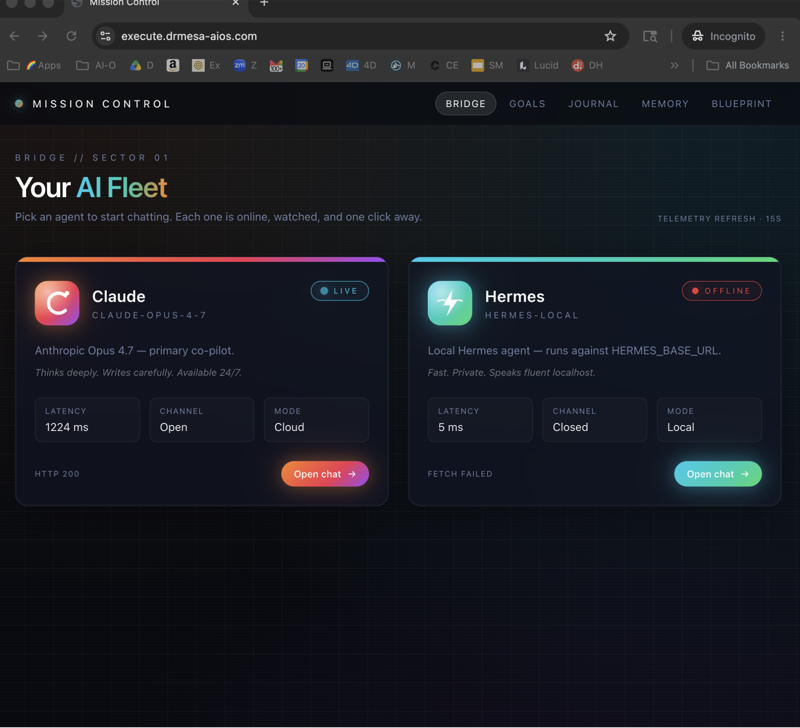

OpenClaw now supports Anthropic's Opus 4.7, which is, as of right now, the most capable model I've run through it.

I'm not being dramatic.

Better reasoning.

Better instruction following.

Better at holding complex, multi-step tasks together over long sessions without drifting off or forgetting what it's doing.

If you've ever had OpenClaw start strong on a task and then slowly lose the plot halfway through a long workflow — that's a context handling problem.

Opus 4.7 is significantly better at this.

The Image Understanding Bonus

Opus 4.7 also comes with bundled image understanding.

That means if your OpenClaw workflows involve reading screenshots, analysing images, or pulling data from visual content — it just works now.

No extra configuration.

No plugins.

No add-ons.

Select Opus 4.7 as your model and image processing is built in.

I've been using this for processing client screenshots and pulling structured data from visual reports.

Works brilliantly.

How I Switched (Took About 30 Seconds)

- Updated OpenClaw to 4.15

- Went to provider settings

- Selected Opus 4.7 under Anthropic

- Done

Every workflow I was already running now uses Opus 4.7.

Same prompts, same automations — just running on a smarter engine.

OpenClaw 4.15 Memory Overhaul (Two Big Changes)

Memory is one of OpenClaw's most underrated features.

It's the reason OpenClaw doesn't forget everything when you close a session.

It remembers your business, your clients, your preferences, your workflows.

In 4.15, two things changed that make this dramatically better.

Change 1: Cloud Memory Storage

Previously, your memory index lived on your local machine and nowhere else.

If you switched laptops — gone.

If you were running automations on a remote server — it couldn't access your memory.

Now your memory index can live in cloud storage via LanceDB.

Your agent's accumulated knowledge about your business travels with you.

I've set this up so my laptop and my server automations share the same memory.

The agent running at 2am on my server knows everything the agent on my laptop learned yesterday.

That's a fundamentally different level of automation.

Change 2: Bounded Memory Reads

Here's a problem I didn't even fully realise I had until it was fixed.

Long sessions were pulling in enormous chunks of memory all at once.

This bloated the context window.

Slowed down responses.

Sometimes pushed things past the token limit entirely.

4.15 caps how much memory gets loaded in a single read.

The agent gets metadata telling it when there's more available.

It reads in controlled amounts instead of dumping everything.

Sessions are noticeably snappier now.

Especially if you've been using OpenClaw for months and your memory files are substantial.
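To make the idea concrete, here's a minimal Python sketch of a bounded read. It is not OpenClaw's actual code: the names `MemoryChunk` and `read_memory_bounded` and the 2,000-character cap are assumptions chosen for illustration. What it shows is the pattern described above: return a capped slice plus metadata saying whether more is available.

```python
from dataclasses import dataclass


@dataclass
class MemoryChunk:
    """One bounded read: the text plus metadata about what is left."""
    text: str
    next_offset: int  # where the following read should start
    has_more: bool    # True when the memory continues past this chunk


def read_memory_bounded(content: str, offset: int = 0, max_chars: int = 2000) -> MemoryChunk:
    """Return at most max_chars characters starting at offset (hypothetical cap)."""
    end = min(offset + max_chars, len(content))
    return MemoryChunk(text=content[offset:end], next_offset=end, has_more=end < len(content))


# Usage: keep reading only while the metadata says there is more.
memory = "client note\n" * 500  # 6,000 characters of accumulated memory
chunk = read_memory_bounded(memory)
chunks_read = 1
while chunk.has_more:
    chunk = read_memory_bounded(memory, offset=chunk.next_offset)
    chunks_read += 1
```

In a real agent the loop would stop as soon as it had enough context, which is the whole point: nothing forces the full 6,000 characters into the context window at once.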


The Dreaming Fix (Finally)

Right, this one was driving me mad.

OpenClaw has a feature called "dreaming" where the agent processes and organises what it learned during a session.

Great feature in theory.

The problem?

All that processing output was getting dumped into your daily memory files.

Sleep phases.

REM phases.

Structured processing blocks.

All sitting right next to your actual useful operational memory.

It made the memory files nearly unreadable.

4.15 moves dreaming output into its own folder: memory/dreaming/.

Your daily memory stays clean and readable.

The agent's processing work lives separately.

Genuinely one of those "why wasn't it always like this" fixes.

Local Model Lean Mode (For Ollama and LM Studio Users)

If you're running OpenClaw with local models, pay attention to this one.

There's a new experimental setting: local model lean mode.

When you flip it on, OpenClaw drops the heavyweight default tools — browser, cron, messages — from the agent's toolkit.

This shrinks the system prompt significantly.
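Here's a rough Python sketch of why dropping tools shrinks the prompt. Everything in it is hypothetical: the tool registry, the descriptions, and the function names `select_tools` and `build_system_prompt` are assumptions, not OpenClaw's internals. The only detail taken from the release is which three tools get dropped.

```python
# Hypothetical tool registry; names and descriptions are illustrative only.
ALL_TOOLS = {
    "read_file": "Read a file from the workspace.",
    "write_file": "Write a file to the workspace.",
    "browser": "Drive a headless browser: navigate, click, extract page content.",
    "cron": "Schedule recurring jobs with cron expressions.",
    "messages": "Send and read messages across connected channels.",
}

# The three heavyweight tools lean mode drops, per the text above.
HEAVYWEIGHT_TOOLS = {"browser", "cron", "messages"}


def select_tools(lean_mode: bool) -> dict:
    """Return the tool set the agent is allowed to see."""
    if not lean_mode:
        return dict(ALL_TOOLS)
    return {name: desc for name, desc in ALL_TOOLS.items() if name not in HEAVYWEIGHT_TOOLS}


def build_system_prompt(tools: dict) -> str:
    """Every tool adds a line to the prompt, so fewer tools means a shorter prompt."""
    lines = ["You are an agent. Available tools:"]
    lines += [f"- {name}: {desc}" for name, desc in tools.items()]
    return "\n".join(lines)


full_prompt = build_system_prompt(select_tools(lean_mode=False))
lean_prompt = build_system_prompt(select_tools(lean_mode=True))
print(len(full_prompt), len(lean_prompt))
```

Real tool definitions carry full parameter schemas, not one-line descriptions, so the savings in practice would be much larger than this toy example suggests.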

Why That Matters

Smaller local models (anything under ~32 billion parameters) choke on massive system prompts.

They get confused by too many tools.

They miss instructions.

They start behaving erratically.

By giving them a trimmed-down toolkit, you trade some capability for a massive gain in consistency.

I tested this with a 7B model through Ollama.

Before lean mode: sessions were hit or miss, and the agent kept trying to use tools it couldn't handle.

After lean mode: noticeably more reliable, follows instructions better, stays on task.

If you're using local models as sub-agents or for cost-sensitive workflows, this is worth turning on immediately.

The Ollama Prefix Fix

There was also a specific bug where model IDs with prefixes (like ollama/qwen:4b) were 404-ing against the API.

4.15 strips the prefix so the model ID resolves correctly.

If your Ollama sessions were randomly breaking mid-run, this is probably why.

Fixed now.
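The fix is easy to picture. Here's a small Python sketch of the idea, assuming a helper I've named `strip_provider_prefix` (that name is hypothetical, and this is not OpenClaw's code):

```python
def strip_provider_prefix(model_id: str, provider: str = "ollama") -> str:
    """Drop a leading 'provider/' routing prefix, leaving the rest of the ID untouched."""
    return model_id.removeprefix(provider + "/")  # requires Python 3.9+


print(strip_provider_prefix("ollama/qwen:4b"))  # qwen:4b
print(strip_provider_prefix("qwen:4b"))         # qwen:4b (already bare, unchanged)
```

The sketch only strips the known provider prefix rather than everything before the first slash, on the assumption that a model name could itself contain a slash.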

The Security Fixes That Actually Matter

I'm not going to list every security patch.

But three of them are worth knowing about because they directly affect your experience.

1. Tool Loop Guard (Now Default)

If you disabled a plugin and forgot about it, OpenClaw would get stuck in a retry loop trying to call the missing tool.

It would just keep retrying "tool not found" until the session timed out.

Now there's a guard that kills the loop after about 10 failed attempts.

Small fix.

Saves you from a genuinely maddening failure mode.
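If you're curious what a guard like this looks like, here's a minimal Python sketch. The class `ToolLoopGuard`, the function `run_agent`, and the hard threshold of 10 are all assumptions for illustration (the release only says "about 10"), not OpenClaw's implementation.

```python
class ToolLoopGuard:
    """Counts failed calls per tool and signals when to stop retrying."""

    def __init__(self, max_failures: int = 10):  # threshold is an assumed value
        self.max_failures = max_failures
        self.failures = {}

    def record_failure(self, tool_name: str) -> bool:
        """Record one failure; return True once the tool has hit the limit."""
        self.failures[tool_name] = self.failures.get(tool_name, 0) + 1
        return self.failures[tool_name] >= self.max_failures


def run_agent(requested_tool: str, available_tools: set, guard: ToolLoopGuard) -> str:
    """Toy agent loop: keeps asking for the same tool until it works or the guard trips."""
    attempts = 0
    while True:
        attempts += 1
        if requested_tool in available_tools:
            return f"ok after {attempts} attempt(s)"
        if guard.record_failure(requested_tool):
            return f"aborted after {attempts} failed attempts"


# The "browser" plugin is disabled, so the call can never succeed.
print(run_agent("browser", {"read_file"}, ToolLoopGuard()))
```

Without the `record_failure` check, that `while True` loop would spin until something external killed the session, which is exactly the failure mode described above.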

2. Secrets Redacted From Approval Prompts

The exec approval screen (where OpenClaw asks permission to run a command) was sometimes showing credential material.

Secrets are now properly redacted.

If you're screen-sharing or recording tutorials (like I do), this matters a lot.
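For a sense of what redaction involves, here's a rough Python sketch. The two patterns and the `redact` function are my own illustrative assumptions, not the rules OpenClaw ships; a production redactor would need a much broader, well-tested pattern set.

```python
import re

# Illustrative (pattern, replacement) pairs only.
SECRET_RULES = [
    # KEY=value assignments where the key name looks sensitive.
    (re.compile(r"(?i)\b([A-Z0-9_]*(?:TOKEN|SECRET|PASSWORD|API_KEY)[A-Z0-9_]*)=(\S+)"),
     r"\1=[REDACTED]"),
    # Bearer tokens in HTTP headers; stop at whitespace or a closing quote.
    (re.compile(r"""(?i)(Bearer)\s+([^\s"']+)"""),
     r"\1 [REDACTED]"),
]


def redact(command: str) -> str:
    """Mask secret-looking values before a command is shown for approval."""
    for pattern, replacement in SECRET_RULES:
        command = pattern.sub(replacement, command)
    return command


print(redact("API_KEY=abc123 curl https://example.com"))
print(redact('curl -H "Authorization: Bearer sk-12345" https://example.com'))
```

The command that actually runs is untouched; only the copy displayed on the approval screen gets masked.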

3. Instant Skill Snapshot Updates

When you enable or disable skills in OpenClaw, the changes now take effect immediately.

Previously, the skill snapshot was stale — meaning a disabled skill could still be called in existing sessions.

That's been patched.

When you remove a skill, it's actually removed.

The Auth Dashboard (New)

This is for anyone running multiple AI providers inside OpenClaw.

There's a new model auth status card in the control UI showing:

- What's connected and healthy

- Token expiry warnings — so you know before a token dies mid-session

- Rate limit pressure — see if a provider is approaching limits before it starts throttling you

I'm running Anthropic and a local Ollama setup simultaneously.

Being able to see the health of both at a glance, instead of discovering problems when something breaks, is a massive quality-of-life improvement.
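Here's roughly how checks like these could be computed, as a Python sketch. The `ProviderAuth` fields, the one-hour expiry warning, and the 80% rate-limit threshold are all assumptions I've picked for illustration; the actual dashboard's data model and thresholds aren't documented here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class ProviderAuth:
    name: str
    token_expires_at: Optional[datetime]  # None for providers without expiring tokens
    requests_used: int
    requests_limit: int                   # 0 means no known limit (e.g. a local model)


def status_for(provider: ProviderAuth, now: datetime,
               expiry_warning: timedelta = timedelta(hours=1),
               pressure_threshold: float = 0.8) -> list:
    """Return a list of warnings, or ['healthy'] when nothing needs attention."""
    warnings = []
    if provider.token_expires_at is not None:
        remaining = provider.token_expires_at - now
        if remaining <= timedelta(0):
            warnings.append("token expired")
        elif remaining <= expiry_warning:
            warnings.append("token expires soon")
    if provider.requests_limit > 0:
        if provider.requests_used / provider.requests_limit >= pressure_threshold:
            warnings.append("rate limit pressure")
    return warnings or ["healthy"]


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
anthropic = ProviderAuth("anthropic", now + timedelta(minutes=30), requests_used=90, requests_limit=100)
ollama = ProviderAuth("ollama", None, requests_used=0, requests_limit=0)
print(status_for(anthropic, now))
print(status_for(ollama, now))
```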

Gemini Text-to-Speech (Now Native)

Google's Gemini TTS is now bundled into OpenClaw's Google plugin.

You can:

- Select from multiple voices

- Output WAV audio files

- Handle PCM telephony output

If you're building voice-based automations — customer-facing audio responses, voice reply systems, spoken content — this is now a native option.

No custom integrations.

No third-party APIs.

Just configure and use it.
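If you ever need to handle the raw audio yourself, the difference between PCM and WAV is just a header. Here's a Python sketch using the standard-library `wave` module. The function name `pcm_to_wav_bytes` is hypothetical, and the defaults (24 kHz, mono, 16-bit) are assumptions you should check against whatever your TTS output actually uses; telephony systems often expect a lower sample rate.

```python
import io
import wave


def pcm_to_wav_bytes(pcm: bytes, sample_rate: int = 24000,
                     channels: int = 1, sample_width: int = 2) -> bytes:
    """Wrap raw PCM samples in a WAV container and return the file bytes."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(channels)
        wav_file.setsampwidth(sample_width)  # bytes per sample: 2 means 16-bit
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(pcm)
    return buffer.getvalue()


# One second of 16-bit mono silence at the assumed 24 kHz rate.
silence = b"\x00\x00" * 24000
wav_bytes = pcm_to_wav_bytes(silence)

with open("reply.wav", "wb") as f:
    f.write(wav_bytes)
```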


My Recommended Setup After the 4.15 Update

Here's exactly what I'd do if I were starting fresh today:

| Setting | What to Do | Why |
|---|---|---|
| AI Model | Switch to Opus 4.7 | Best reasoning + image understanding |
| Memory | Enable cloud storage | Persists across machines |
| Dreaming | Confirm output goes to memory/dreaming/ | Keeps memory files clean |
| Local models | Turn on lean mode | Way more reliable sessions |
| Auth | Check the new dashboard | Spot token/rate issues early |

Don't try to change everything at once.

Pick the two that match your biggest current pain points and implement those this week.

The Bigger Picture

OpenClaw 4.15 has contributions from over 50 community members.

This isn't some closed-source tool built in a vacuum.

It's an active project with thousands of people stress-testing workflows, finding edge cases, and pushing fixes.

When you build your lead generation, your content automation, your client outreach on OpenClaw — you're building on something that's being actively improved by a community that uses it for real business, every day.

The core feature set is strong.

What the team is building now is reliability at scale.

Better memory management.

Better session recovery.

Tighter security.

Cleaner integrations.

That's the sign of a mature platform.

And a platform worth building your business on.

Get Help Setting Up OpenClaw Opus 4.7

If you want step-by-step help with any of this, that's what the AI Profit Boardroom is for.

We've already got members running the new updates.

Inside you get:

- Full tutorials on OpenClaw 4.15 with Opus 4.7 configuration

- Cloud memory setup guides for LanceDB

- Lean mode walkthroughs for Ollama and LM Studio

- A prompt library built specifically around OpenClaw workflows

- Weekly coaching calls where we dig into setups live and answer your specific questions

- 3,000+ business owners sharing what's working

We update the training daily as new releases drop.

The moment something changes, we've got a tutorial on it.

Join the AI Profit Boardroom →

OpenClaw Opus 4.7: Frequently Asked Questions

How do I update OpenClaw to use Opus 4.7?

Update to OpenClaw version 4.15 first. Then go to your provider settings, select Anthropic, and choose Opus 4.7 as your default model. All existing workflows will automatically use the new model. The whole process takes under a minute.

What's the difference between Opus 4.7 and previous Opus models?

Opus 4.7 has meaningfully better reasoning, stronger instruction following, and improved context handling over long sessions. It also bundles image understanding natively — so if your workflows involve processing screenshots or visual content, that's included without any extra setup.

Do I need cloud memory or is local memory fine?

If you only use OpenClaw on a single machine, local memory works fine. Cloud memory matters when you run OpenClaw across multiple computers, on a remote server, or want your agent's knowledge to persist reliably without depending on one machine's local storage. For any serious production setup, cloud memory is the right move.

Will lean mode break my existing workflows?

Lean mode removes heavyweight tools (browser, cron, messages) from the agent's toolkit. If your workflows don't use those tools, nothing breaks. If they do, you'll want to keep lean mode off for those specific workflows. It's most useful for local models under 32B parameters where the full toolkit causes inconsistent behaviour.

Is Opus 4.7 worth the cost compared to cheaper models?

For business-critical workflows — lead generation, client outreach, content that needs to rank — yes, absolutely. The reasoning and instruction-following improvements mean fewer failed attempts, fewer retries, and more reliable output. For simple tasks or high-volume low-stakes work, a cheaper model might still make sense.

What should I set up first after updating?

Switch to Opus 4.7 as your default model — that's the single biggest improvement for the least effort. After that, check whether the dreaming folder separation is active (it should be by default) and turn on lean mode if you're running local models. Those three changes will make the most noticeable difference immediately.

Opus 4.7 running on OpenClaw 4.15 is the most reliable version of this tool I've used, and if you're building any kind of AI-powered workflow, this is the version to be on.