Claude Code free via Ollama is the technical sleight-of-hand that saves developers thousands annually — and this guide covers every detail.

The trick: point Claude Code at Ollama instead of Anthropic's API.

Ollama serves free models (cloud and local).

Your Claude Code experience continues.

Cost drops to zero.

Let me walk through the complete technical setup.


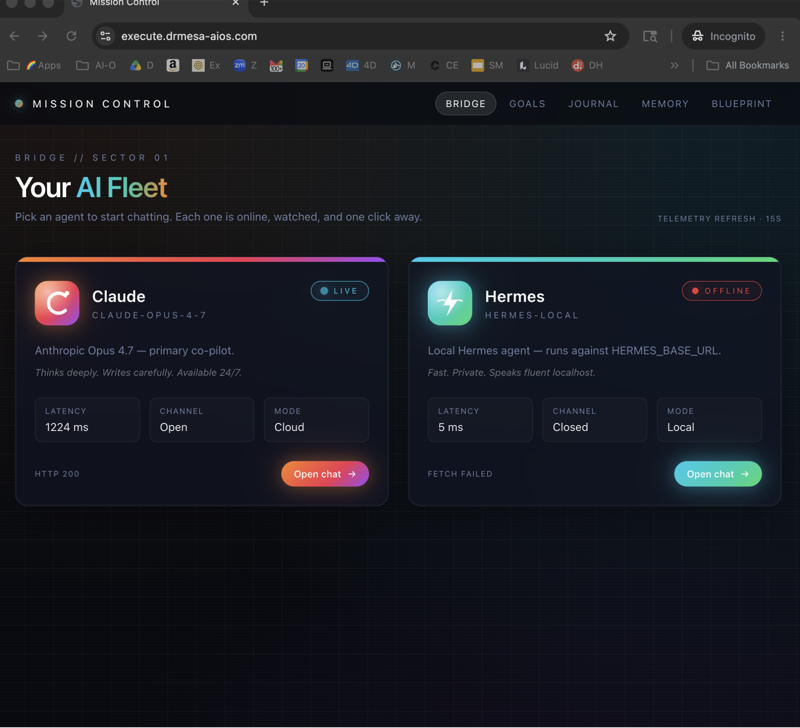

The Claude Code Free Technical Architecture

Traditional Claude Code

Claude Code → Anthropic API → Claude models → Response

Requires active subscription.

Claude Code Free via Ollama

Claude Code → Ollama → Free model (cloud or local) → Response

No subscription needed.

Why This Works

Claude Code can be pointed at any endpoint that speaks Anthropic's API format.

Ollama exposes such an endpoint.

Ollama serves free models.

Math: free.
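Here is a minimal sketch of that redirect. It assumes Ollama is running locally on its default port (11434) and that your Ollama version exposes an Anthropic-compatible API there. The helper name `launch_claude_via_ollama` is my own, used only for illustration.

```shell
# Assumption: Ollama is running locally on its default port (11434) and your
# version exposes an Anthropic-compatible API on that port.
export ANTHROPIC_BASE_URL="http://localhost:11434"
# Placeholder token: Claude Code expects one to be set; the value is arbitrary here.
export ANTHROPIC_AUTH_TOKEN="ollama"

# Hypothetical helper: start Claude Code against a model that Ollama serves.
# $1 is the model tag exactly as it appears in `ollama list`.
launch_claude_via_ollama() {
  claude --model "$1"
}
```

With those two variables set, `launch_claude_via_ollama glm5.1-cloud` would start Claude Code against that model instead of Anthropic's API.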

Model Selection Deep Dive

Cloud Models on Ollama (Free Tier)

GLM 5.1 Cloud

- Fast response times

- Strong coding quality

- Free within usage limits

- My top pick for most users

Qwen 3.5 Cloud

- Highest quality among free options

- Slower than GLM 5.1

- Good for complex tasks

- Also free within limits

Kimi K2.5 Cloud

- Good general-purpose

- Fast

- Free tier available

Local Models (Always Free)

Gemma 4

- Lightweight (runs on modest hardware)

- Fast

- Good for simple tasks

Qwen 3.5 Local

- Best quality local option

- Needs 32GB+ RAM

- Slower than cloud

Llama 3.3

- Reliable, stable

- Good community support

- Middle-ground performance

Model Selection Framework

Use cloud GLM 5.1 when:

- Speed matters

- Task is standard complexity

- Within usage limits

Use cloud Qwen 3.5 when:

- Quality matters most

- Complex reasoning needed

- Within usage limits

Use local Gemma 4 when:

- Privacy required

- Unlimited usage needed

- Speed matters on limited hardware

Use local Qwen 3.5 when:

- Privacy + quality both matter

- You have 32GB+ RAM

- Can accept slower responses
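The framework above can be written down as a small decision function. This is only a sketch: the function name and its three arguments are hypothetical, and it prints the model names used in this guide rather than exact Ollama tags.

```shell
# pick_model PRIVACY QUALITY RAM_GB
#   PRIVACY: "private" or "public"; QUALITY: "high" or "standard"
#   RAM_GB:  installed memory in whole gigabytes
# Prints the model name suggested by the framework above.
pick_model() {
  privacy=$1; quality=$2; ram_gb=$3
  if [ "$privacy" = "private" ]; then
    # Private code stays on the machine, so only local models qualify.
    if [ "$quality" = "high" ] && [ "$ram_gb" -ge 32 ]; then
      echo "Qwen 3.5 Local"
    else
      echo "Gemma 4"
    fi
  elif [ "$quality" = "high" ]; then
    echo "Qwen 3.5 Cloud"
  else
    echo "GLM 5.1 Cloud"
  fi
}

pick_model private high 64     # Qwen 3.5 Local
pick_model private high 16     # Gemma 4
pick_model public standard 16  # GLM 5.1 Cloud
```

Privacy is checked first because it is the one requirement that rules out cloud models entirely; quality and RAM only decide between the remaining options.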

Step-by-Step Installation

Step 1: Install Ollama

# macOS

curl -fsSL https://ollama.com/install.sh | sh

# Linux

curl -fsSL https://ollama.com/install.sh | sh

# Windows

# Download from ollama.com

Verify installation:

ollama --version
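A version number only proves the CLI is installed, not that the server is running. The sketch below checks both. It assumes the server listens on the default address, localhost:11434, and `check_ollama` is a name I picked for illustration.

```shell
# check_ollama: report whether the CLI is installed and the local server answers.
# Assumption: the server listens on the default address, localhost:11434.
check_ollama() {
  if ! command -v ollama >/dev/null 2>&1; then
    echo "ollama not found on PATH" >&2
    return 1
  fi
  if ! curl -fsS --max-time 5 http://localhost:11434/api/version >/dev/null 2>&1; then
    echo "ollama is installed but the server is not responding" >&2
    return 2
  fi
  echo "ollama is installed and the server is up"
}
```

Run `check_ollama` after installing. A return code of 1 means the install did not land on your PATH, and 2 means you likely need to start the Ollama app or `ollama serve`.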

Step 2: Pull Your Chosen Model

# Cloud model (fast, free with limits)

ollama pull glm5.1-cloud

# Or local model (unlimited free)

ollama pull gemma4

Step 3: Launch Claude Code With Ollama

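ollama launch claude

This assumes a recent Ollama release that includes the launch command, which starts Claude Code wired to Ollama. Select glm5.1-cloud when asked for a model, or check ollama launch --help for passing it directly. Note that ollama run glm5.1-cloud on its own only opens Ollama's built-in chat prompt; it does not start Claude Code. On older versions, set ANTHROPIC_BASE_URL to the local Ollama address before running claude.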

Step 4: Verify

Test with a prompt:

Are you working?

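You should get a coherent reply. Models often misreport their own name, so confirm the active model with Claude Code's /model or /status command rather than by asking.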
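If Claude Code does not answer, it helps to test the Ollama side on its own. The sketch below sends the same test prompt straight to the local server. It assumes your Ollama version serves an Anthropic-compatible endpoint at `/v1/messages` on port 11434; both function names are hypothetical.

```shell
# build_probe MODEL PROMPT: print a minimal Anthropic Messages-style request body.
# Note: PROMPT is inserted verbatim, so it must not contain double quotes or backslashes.
build_probe() {
  printf '{"model":"%s","max_tokens":64,"messages":[{"role":"user","content":"%s"}]}\n' "$1" "$2"
}

# probe_model MODEL: send the test prompt to the local Ollama server.
# Assumption: an Anthropic-compatible endpoint at /v1/messages on port 11434.
probe_model() {
  build_probe "$1" "Are you working?" |
    curl -fsS http://localhost:11434/v1/messages \
      -H "content-type: application/json" \
      -H "x-api-key: ollama" \
      -H "anthropic-version: 2023-06-01" \
      -d @-
}
```

Calling `probe_model glm5.1-cloud` should print a JSON reply if the model is reachable. An error here points at Ollama or the model tag rather than at Claude Code.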

Step 5: Use Normally

Continue with standard Claude Code workflows.

Everything works the same — just with a different underlying model.

🔥 Master every aspect of Claude Code Free

Inside the AI Profit Boardroom, I share advanced Claude Code Free configurations — hybrid cloud/local setups, team deployments, performance optimisation. Plus weekly updates on new free models.

Managing Usage Limits

The Reality of Free Tiers

Ollama's free cloud access has limits.

Not unlimited.

Generous for individuals.

Tight for teams at scale.

Staying Within Limits

- Monitor your usage

- Use local models for high-volume tasks

- Reserve cloud for quality-sensitive work

- Switch between cloud/local as needed
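Switching between cloud and local can be scripted as a simple fallback chain. This is a sketch under my own assumptions: `try_models` is a hypothetical helper, and the runner you pass in is whatever command you use to start a session with a given model tag.

```shell
# try_models RUNNER MODEL...
# Calls RUNNER with each model tag in order and stops at the first success.
try_models() {
  runner=$1; shift
  for model in "$@"; do
    if "$runner" "$model"; then
      echo "used: $model"
      return 0
    fi
    echo "failed: $model, trying next" >&2
  done
  return 1
}
```

For example, with a runner function that wraps `claude --model "$1"`, calling `try_models my_runner glm5.1-cloud gemma4` would try the cloud model first and drop to the local one if the cloud call fails, for instance after hitting a usage limit.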

When to Upgrade

If consistently hitting limits:

- Consider paid Ollama tier (still cheaper than Anthropic)

- Deploy local models on powerful hardware

- Review workflow efficiency

Beyond Free

Ollama paid tiers: significantly cheaper than Anthropic direct.

Still meaningful savings vs paid Claude Code subscription.

Performance Optimisation

For Cloud Models

- Keep prompts concise

- Use appropriate context length

- Cache common queries when possible

For Local Models

- Use quantised versions (Q4 for speed)

- Load models into RAM

- SSD for fast model loading

- Sufficient RAM to avoid swapping
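To judge whether a quantised model fits in RAM, a common rule of thumb is parameters times bits per weight, divided by 8. The sketch below does that arithmetic. The parameter counts are illustrative examples, not the sizes of the models named in this guide, and `weights_gb` is a hypothetical helper.

```shell
# weights_gb PARAMS_BILLIONS BITS_PER_WEIGHT
# Rough size of the model weights alone, in GB (params x bits / 8).
# It ignores context cache and runtime overhead, so treat it as a lower bound.
weights_gb() {
  tenths=$(( $1 * $2 * 10 / 8 ))
  printf '%d.%d\n' $(( tenths / 10 )) $(( tenths % 10 ))
}

weights_gb 7 4    # 3.5
weights_gb 7 16   # 14.0
weights_gb 32 4   # 16.0
```

This is why Q4 matters on modest hardware: the same 7-billion-parameter model drops from about 14 GB at 16-bit to about 3.5 GB at 4-bit.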

Hardware Recommendations

- Minimum: 16GB RAM, SSD, modern CPU

- Recommended: 32GB+ RAM, Apple Silicon or dedicated GPU

- Optimal: M4 Max Mac or equivalent workstation

Comparison: Paid vs Free Claude Code

Quality Gap

Paid Claude (Opus 4.7): 100% quality baseline.

Free via GLM 5.1 Cloud: ~85-90% of paid quality.

Free via Qwen 3.5: ~85-92% quality.

Free via Gemma 4: ~65-75% quality.

Speed Gap

Paid Claude: fastest by wide margin.

Free cloud: 2-3x slower than paid.

Free local: 5-10x slower than paid.

Feature Gap

Most Claude Code features work with free models.

Some features tied to Anthropic API may not.

Value Proposition

For 90% of tasks, free works perfectly well.

For elite-quality work, paid justifies itself.

Most users: free + occasional paid = ideal mix.


Security and Privacy

Cloud Model Considerations

Your code is sent to Ollama's cloud.

Ollama's privacy policy applies.

Local Model Privacy

Nothing leaves your machine.

Best for sensitive projects.

Hybrid Approach

Public code → cloud models (speed).

Private code → local models (privacy).

Enterprise Considerations

For enterprise use, local models often preferable.

Compliance simpler.

No data residency concerns.

See my Claude Code Local deep dive for the offline-only approach.

Integration Patterns

Claude Code + VS Code

Configure Claude Code extension to use Ollama endpoint.

Standard development workflow preserved.

Claude Code + Automation

Pair with Hermes or OpenClaw for agentic workflows.

All using free models via Ollama.

Claude Code + SEO Content

See my Claude Code AI SEO setup for content automation that runs essentially free via Ollama.

Scaling Beyond Individual Use

For Teams

Deploy Ollama on shared server.

Team members connect via network.

Central management, individual productivity.
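A shared-server setup might look like the sketch below. It assumes a trusted private network, because binding Ollama to all interfaces exposes its API to every machine that can reach the port. The hostname `ollama-box.internal` and the helper `team_base_url` are hypothetical.

```shell
# On the shared server (assumption: a trusted private network, since this
# exposes the Ollama API to every machine that can reach the port):
#   OLLAMA_HOST=0.0.0.0:11434 ollama serve

# team_base_url HOST [PORT]: build the URL a teammate's machine should use.
team_base_url() {
  echo "http://$1:${2:-11434}"
}

# On each developer machine; "ollama-box.internal" is a hypothetical hostname.
export ANTHROPIC_BASE_URL="$(team_base_url ollama-box.internal)"
export ANTHROPIC_AUTH_TOKEN="ollama"
echo "$ANTHROPIC_BASE_URL"   # http://ollama-box.internal:11434
```

Each developer then runs Claude Code as usual, and all requests go to the one shared Ollama instance.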

For Agencies

Dedicated Ollama instances per project.

Client data isolated.

Cost-effective AI-powered service delivery.

For Organisations

Enterprise Ollama deployment.

Compliance-friendly local inference.

Scalable to hundreds of developers.

🔥 Scale Claude Code Free to your team or organisation

Inside the AI Profit Boardroom, I share scaling patterns for Claude Code Free — team deployments, agency setups, enterprise patterns. Plus specific configurations for various hardware and use cases.

Claude Code Free Technical: Frequently Asked Questions

Is this officially supported?

Yes, on the Ollama side. Ollama exposes an Anthropic-compatible API and documents Claude Code as an integration, and Claude Code accepts a custom API base URL. This setup is legitimate.

Will this work with future Claude Code updates?

Backward compatibility typically preserved. Minor adjustments might be needed occasionally.

Can I contribute to Ollama or Claude Code?

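Ollama is open-source and welcomes contributions. Claude Code is proprietary, though it has a public GitHub repository where you can file issues and feedback.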

How secure is this setup?

As secure as your chosen model. Local models = most secure. Cloud models = standard cloud security.

Does Anthropic approve of this?

They don't forbid it. You're simply using Claude Code with a different model provider.

What happens when GLM 5.1 moves out of free tier?

Switch to another free model. Options will likely always exist.

Related Reading

- Claude Code Local: Local-only deep dive

- Ollama + Hermes: Ollama ecosystem intro

- Claude Code AI SEO: Content automation

- Hermes Agent Workspace: Paired tool

- OpenClaw Byterover: Alternative ecosystem

Claude Code free via Ollama is the technical setup that makes professional-grade AI coding accessible to everyone — and for developers serious about productivity without subscription bloat, Claude Code free is the setup you need.