Claude Code Local is genuinely one of the most exciting open-source projects of 2026 — and for anyone who does real coding work, it's worth paying attention to.

Here's what it does:

- Runs Claude Code with local models instead of Anthropic's API

- Works completely offline once the models are downloaded

- Costs nothing after initial setup

- Supports Gemma, Qwen, Llama, and other Ollama models

For developers tired of subscription costs and rate limits, this changes the economics.

Let me walk through the technical setup properly.

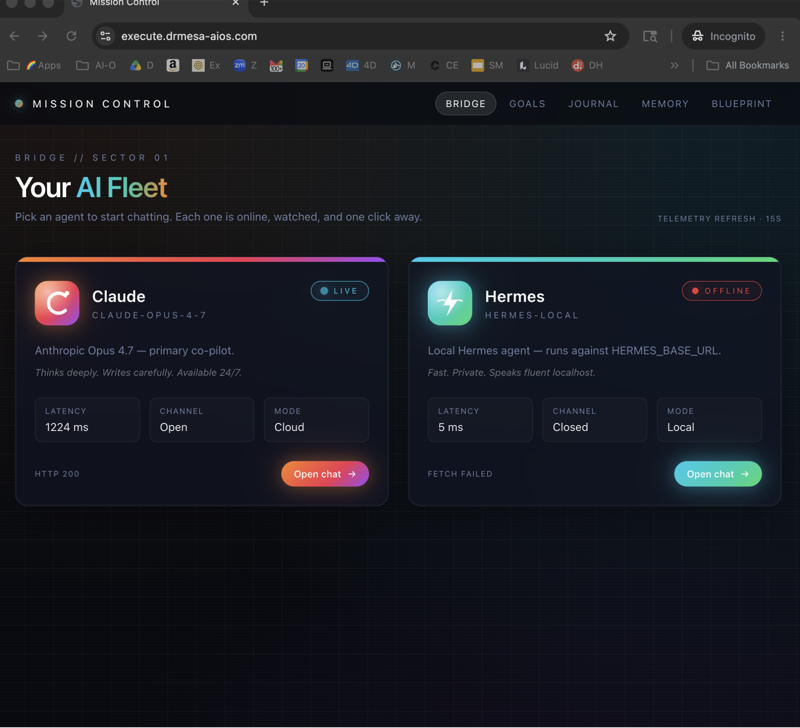

The Claude Code Local Architecture

Claude Code Local replaces the cloud API layer with local inference.

Traditional Claude Code

Your machine → Anthropic API → Claude model → Response

Claude Code Local

Your machine → Ollama → Local model → Response

Everything stays on your hardware.

No network calls once the models are on disk.

No API keys.

No rate limits.
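To make the local path concrete, here is a minimal sketch of how a client might locate the Ollama server on your machine. It assumes Ollama's default address of 127.0.0.1:11434 and its `OLLAMA_HOST` environment variable. The helper name `ollama_url` is made up for illustration and is not part of Claude Code Local.

```shell
# Build a URL for the local Ollama HTTP API.
# Assumption: Ollama listens on 127.0.0.1:11434 unless OLLAMA_HOST overrides it.
# ollama_url is a hypothetical helper, not part of any tool.
ollama_url() {
  host="${OLLAMA_HOST:-127.0.0.1:11434}"
  case "$host" in
    http://*|https://*) ;;          # scheme already present
    *) host="http://$host" ;;       # bare host:port, so add a scheme
  esac
  printf '%s%s\n' "${host%/}" "$1"
}

# Usage (needs a running Ollama server):
#   curl -s "$(ollama_url /api/tags)"    # lists the models installed locally
```

If that `curl` call returns JSON, the whole request path stayed on your hardware.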

Why This Works Now

Two things converged to make this viable:

1. Local Models Got Really Good

Gemma 4, Qwen 3.5, and Llama 3.3 are genuinely capable.

They're not as good as Opus 4.7, but in my experience they're good enough for roughly 80% of everyday coding tasks.

2. Ollama Made Deployment Trivial

Installing and running local models used to be painful.

Ollama abstracts all the complexity.

One command downloads, configures, and serves models.

Together, these make Claude Code Local practical.

Claude Code Local Detailed Setup Process

Step 1: Install Ollama

# macOS

curl -fsSL https://ollama.com/install.sh | sh

# Linux

curl -fsSL https://ollama.com/install.sh | sh

# Windows

# Download installer from ollama.com

Step 2: Pull Your Models

# Best balance of quality/speed

ollama pull qwen3.5

# Faster but less capable

ollama pull gemma4

# Well-tested fallback

ollama pull llama3.3
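If you re-run the setup, you may want to skip models that are already on disk. The sketch below assumes the usual `ollama list` layout: a header row, then one model per line with the name in the first column. `has_model` is a hypothetical helper, and the model tags are the ones used in this post.

```shell
# Check whether a model name appears in `ollama list` output read from stdin.
# has_model is a hypothetical helper; it matches "name" or "name:tag" in column 1.
has_model() {
  awk -v want="$1" '
    NR > 1 {                      # skip the header row
      split($1, parts, ":")       # "qwen3.5:latest" -> "qwen3.5"
      if ($1 == want || parts[1] == want) found = 1
    }
    END { exit(found ? 0 : 1) }
  '
}

# Usage (needs Ollama installed): pull anything that is missing.
#   for m in qwen3.5 gemma4 llama3.3; do
#     ollama list | has_model "$m" || ollama pull "$m"
#   done
```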

Step 3: Install Claude Code Local

Copy the installation commands from the GitHub repo.

Paste into your Claude Code instance:

Install Claude Code Local for me with Qwen 3.5 as the default model.

Claude handles the rest.

Step 4: Verify It Works

claude-code-local "Are you working?"

You should get a reply.

Then confirm the model is the one you expect:

claude-code-local --model qwen3.5 "hello"
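If either command fails with "command not found", a quick PATH check narrows things down. This is only a sketch: `missing_tools` is a hypothetical helper, and it checks only that the binaries exist, not that they work.

```shell
# Report which required commands are missing from PATH.
# missing_tools is a hypothetical helper; it prints one missing name per line.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Usage:
#   missing="$(missing_tools ollama claude-code-local)"
#   [ -z "$missing" ] || echo "Not installed: $missing"
```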

Model Comparison (Detailed)

Qwen 3.5

Strengths:

- Best output quality among local options

- Excellent code completion

- Good long-context handling

- Strong across programming languages

Weaknesses:

- Larger memory footprint

- Slower than Gemma

- Needs 32GB+ RAM for best performance

Gemma 4

Strengths:

- Very fast

- Low memory requirements

- Works on modest hardware

- Good for quick tasks

Weaknesses:

- Lower quality than Qwen

- Struggles with complex reasoning

- Limited context retention

Llama 3.3

Strengths:

- Well-tested and stable

- Good general-purpose model

- Strong community support

- Predictable performance

Weaknesses:

- Not best at anything specific

- Older architecture

- Moderate quality/speed ratio
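One way to turn the comparison into a default is to pick by installed RAM. The 32 GB cut-off comes from the Qwen 3.5 note above. Sending everything below that to Gemma 4 is my own simplification, and `pick_model` is a hypothetical helper.

```shell
# Suggest a default model from installed RAM in whole gigabytes.
# The 32 GB threshold is from the Qwen 3.5 weaknesses list; the fallback to
# gemma4 below that is an assumption. pick_model is a hypothetical helper.
pick_model() {
  if [ "$1" -ge 32 ]; then
    echo qwen3.5
  else
    echo gemma4
  fi
}

# Usage on macOS (hw.memsize reports physical memory in bytes):
#   ram_gb=$(( $(sysctl -n hw.memsize) / 1073741824 ))
#   pick_model "$ram_gb"
```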

My Ollama + Hermes guide has more detail on Ollama model selection.

Switching Models Dynamically

One of Claude Code Local's best features: hot-swapping models.

Use a fast model for simple tasks:

claude-code-local --model gemma4 "add a comment to this function"

Switch to a high-quality model for complex work:

claude-code-local --model qwen3.5 "refactor this service to use dependency injection"

Same Claude Code workflow.

Different underlying model.

Switch as needed.
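If you switch often, a small shell function saves typing. This is a sketch, not a feature of the tool: `ccl`, the tier names `fast` and `deep`, and the `CCL_DRY_RUN` variable are all hypothetical. The `--model` flag and the model tags are the ones used in the commands above.

```shell
# Route a prompt to a model by task tier.
# ccl, the tiers (fast, deep) and CCL_DRY_RUN are hypothetical names.
ccl() {
  case "${1:-}" in
    fast) model=gemma4 ;;
    deep) model=qwen3.5 ;;
    *) echo "usage: ccl fast|deep PROMPT" >&2; return 2 ;;
  esac
  shift
  if [ -n "${CCL_DRY_RUN:-}" ]; then
    # Dry run: print the command instead of running it
    printf 'claude-code-local --model %s "%s"\n' "$model" "$*"
  else
    claude-code-local --model "$model" "$*"
  fi
}

# Usage:
#   ccl fast "add a comment to this function"
#   ccl deep "refactor this service to use dependency injection"
```

Setting `CCL_DRY_RUN=1` lets you check which model a tier maps to without running anything.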

Offline Use Cases

Travel Work

Flight with no internet.

Full Claude Code functionality preserved.

Confidential Client Projects

Client data never leaves your machine.

A strong fit for regulated industries.

Hobby Projects

No API costs for exploratory work.

Unlimited iteration.

Teaching and Workshops

Deploy for students without worrying about API keys/quotas.

Performance Benchmarks

Rough numbers on my M4 Max:

Simple Tasks

- Cloud Claude: 2-5 seconds

- Qwen 3.5 Local: 5-15 seconds

- Gemma 4 Local: 2-8 seconds

Complex Tasks

- Cloud Claude: 10-30 seconds

- Qwen 3.5 Local: 30-90 seconds

- Gemma 4 Local: 60-180 seconds

Local is slower but acceptable for most workflows.

Cost savings justify the time trade-off for high-volume users.
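To put a number on the trade-off, you can compare the midpoints of the ranges above. The midpoint is my own simplification of rough figures, and `slowdown` is a hypothetical helper.

```shell
# Ratio of local to cloud latency, using the midpoint of each range.
# slowdown is a hypothetical helper; arguments: local_lo local_hi cloud_lo cloud_hi
slowdown() {
  awk -v a="$1" -v b="$2" -v c="$3" -v d="$4" \
    'BEGIN { printf "%.1fx\n", ((a + b) / 2) / ((c + d) / 2) }'
}

slowdown 5 15 2 5       # Qwen 3.5, simple tasks
slowdown 30 90 10 30    # Qwen 3.5, complex tasks
slowdown 2 8 2 5        # Gemma 4, simple tasks
slowdown 60 180 10 30   # Gemma 4, complex tasks
```

On these numbers the script prints 2.9x, 3.0x, 1.4x and 6.0x, so Qwen 3.5 sits at about three times cloud latency for both kinds of task, while Gemma 4 is close to cloud speed on simple tasks and falls well behind on complex ones.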

Security and Privacy

What Claude Code Local Protects

- Code contents

- Proprietary business logic

- Client data in your codebase

- API secrets

- Customer data

What It Doesn't Protect

- Model downloads and updates (these still need internet)

- Initial Ollama installation (also needs internet)

- Code pushed to remote repos (that's your git client, not Claude Code)

For most privacy-conscious use, this is dramatically better than cloud Claude Code.
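The privacy story depends on the Ollama server only listening on your own machine. A quick sanity check, assuming Ollama's `OLLAMA_HOST` variable and its default of 127.0.0.1:11434, might look like this. `is_loopback` is a hypothetical helper and only inspects the configured value, not the actual open ports.

```shell
# Succeed only if an OLLAMA_HOST-style value points at this machine.
# Assumption: an unset or empty value means Ollama's default, 127.0.0.1:11434.
# is_loopback is a hypothetical helper.
is_loopback() {
  addr="${1:-127.0.0.1:11434}"
  addr="${addr#http://}"; addr="${addr#https://}"   # drop any scheme
  case "$addr" in
    127.0.0.1|127.0.0.1:*|localhost|localhost:*|"[::1]"|"[::1]:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage:
#   is_loopback "${OLLAMA_HOST:-}" || echo "Ollama may be reachable from other machines"
```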

Integration With Existing Workflows

VS Code

Set up Claude Code Local as a task or terminal integration.

JetBrains IDEs

Shell command configurations work well.

Command Line

Native to the CLI experience.

Git Hooks

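Pre-commit hooks that call a fast local model add a few seconds per commit (2-8 seconds for simple tasks with Gemma 4 on my M4 Max).

That's small enough to have little impact on development velocity.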
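A pre-commit hook along these lines could pass the staged diff to a fast model. Treat it as a sketch: it assumes `claude-code-local` accepts the whole prompt as a single argument, as in the commands earlier in this post, and `review_prompt` and the prompt wording are hypothetical.

```shell
#!/bin/sh
# Sketch of .git/hooks/pre-commit: ask a fast local model to review staged changes.
# Assumption: claude-code-local takes the prompt as one argument.
# review_prompt is a hypothetical helper.
review_prompt() {
  diff="$(cat)"                    # staged diff arrives on stdin
  [ -n "$diff" ] || return 1       # nothing staged, nothing to review
  printf 'Review this diff for obvious bugs. Be brief.\n\n%s\n' "$diff"
}

# Hook body (uncomment inside a real hook):
#   if prompt="$(git diff --cached | review_prompt)"; then
#     claude-code-local --model gemma4 "$prompt"
#   fi
#   exit 0    # advisory only: never block the commit
```

Keeping the hook advisory means a slow or failed model call never stops a commit.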

For non-coding AI work, see my Claude Code AI SEO setup.

Claude Code Local: Frequently Asked Questions

How much faster is cloud Claude than local?

Typically 3-5x faster, depending on your hardware and the complexity of the task.

Can local models handle long contexts?

Qwen 3.5 handles 128K+ context well. The other models are more limited.

Which local model is best for Python?

Qwen 3.5 slightly edges Llama for Python specifically.

Which is best for web development?

All three work well. Qwen 3.5 has an edge on modern frameworks.

Can I use Claude Code Local commercially?

Yes, the project's open-source licence permits commercial use. The models carry their own licences, so check those as well.

Will Anthropic's Claude Code work alongside Claude Code Local?

Yes, the two installations don't conflict.

Related Reading

- Ollama + Hermes: Foundation knowledge

- Claude Code AI SEO: Cloud Claude Code workflow

- Claude Opus 4.7 AI SEO: Cloud model comparison

- Hermes VS OpenClaw: Full agent comparison

Claude Code Local brings the Claude Code experience to free, offline, private local inference. For anyone serious about AI-powered development in 2026, it deserves a place in your toolkit.