DeepSeek V4 Ollama is the install I've been recommending to every single person in my community this week — and it takes about four minutes start to finish.

I'll walk you through it command by command.

If you've ever tried to install a big open-source model locally, you know the drill.

Download 40GB of weights. Wait an hour. Discover your machine can't actually run it. Cry a little. Uninstall.

This guide is the opposite of that experience.

Why DeepSeek V4 Ollama Skips All the Pain

Normal Ollama installs need decent hardware — 16GB RAM minimum for anything respectable, ideally a GPU.

DeepSeek V4 Flash through Ollama doesn't.

Because it's a cloud model.

The Ollama CLI on your machine just sends API calls to Ollama's hosted version. Inference happens on their servers. You see the response stream back in your terminal.

Net result — a frontier-class model running through your familiar ollama run command, on a five-year-old laptop, for free.

That's why DeepSeek V4 Ollama is the install that actually works for everyone, not just people with £3000 desktops.
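If you want to see the "CLI just sends API calls" idea for yourself, here's a rough sketch of talking to the Ollama server on your own machine over HTTP. It assumes the server is on its default local port (11434), that the /api/chat endpoint behaves for cloud models the way it does for local ones, and that the model name matches the one used later in this guide. The helper names (json_escape, build_chat_payload, ask) are mine, not part of Ollama.

```shell
#!/usr/bin/env bash
# Sketch only: assumes the default local Ollama port and that the model
# name below matches what ollama.com lists for you.
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434}"
MODEL="${MODEL:-deepseek-v4-flash}"

# Escape backslashes and double quotes so a prompt is safe inside JSON.
# (Hypothetical helper; it does not handle newlines or control characters.)
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build the JSON body for a single-turn, non-streaming chat request.
build_chat_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}],"stream":false}' \
    "$(json_escape "$1")" "$(json_escape "$2")"
}

# Send one prompt to the local server and print the raw JSON reply.
ask() {
  curl -fsS "$OLLAMA_URL/api/chat" \
    -H 'Content-Type: application/json' \
    -d "$(build_chat_payload "$MODEL" "$1")"
}

# Usage: ask "Explain closures in one paragraph"
```

The reply comes back as JSON, so you'd normally pipe it through a JSON tool to pull out the message text. For day-to-day chatting the plain CLI is still simpler.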

Step 1: Get the Latest Ollama

Go to ollama.com.

Copy the install command. On Mac it's something like:

curl -fsSL https://ollama.com/install.sh | sh

Paste into terminal. Hit enter. Wait thirty seconds.

Critical bit — even if you already have Ollama, run this command. The cloud model support is recent. Old builds won't see DeepSeek V4 Flash at all.

I learned that the hard way after twenty minutes of "model not found" errors.
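To save yourself the same twenty minutes, a quick version check helps. This is a minimal sketch: MIN_VERSION is a placeholder I made up, not an official threshold, so swap in whatever the Ollama release notes say. It also assumes your sort supports -V (version sort), which is true for GNU coreutils and recent macOS.

```shell
#!/usr/bin/env bash
# Placeholder minimum; NOT an official number. Check Ollama's release notes.
MIN_VERSION="${MIN_VERSION:-0.12.0}"

# Pull the first x.y.z number out of `ollama --version` output.
installed_ollama_version() {
  ollama --version 2>/dev/null | grep -oE '[0-9]+\.[0-9]+\.[0-9]+' | head -n 1
}

# Succeed if version $1 is greater than or equal to version $2.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Report whether the installed CLI meets the placeholder minimum.
check_ollama_version() {
  local current
  current="$(installed_ollama_version)"
  if [ -z "$current" ]; then
    echo "ollama not found on PATH"
    return 1
  fi
  if version_ge "$current" "$MIN_VERSION"; then
    echo "ok: $current"
  else
    echo "too old: $current (want >= $MIN_VERSION)"
    return 1
  fi
}

# Usage: check_ollama_version
```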

Step 2: Find DeepSeek V4 Flash on the Models Page

Open ollama.com/models in your browser.

Search "deepseek".

You'll see DeepSeek V4 Flash with a little cloud icon next to it. That cloud icon is your signal — it's hosted, not local, no download needed.

Copy the exact model identifier. Don't type it from memory. Naming conventions trip people up.

Step 3: Run It

Back in your terminal:

ollama run deepseek-v4-flash

No download bar appears.

No "extracting layers" log.

A blinking prompt opens and you're chatting with DeepSeek V4.

That's the entire install. Genuinely.
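You don't have to use the interactive chat either. Passing the prompt as an argument to ollama run answers once and exits, which is handy in scripts. A small sketch, assuming the model name above is the one listed for you (ask_once and summarise_file are my own helper names):

```shell
#!/usr/bin/env bash
# Model name taken from the command above; copy yours from ollama.com.
MODEL="${MODEL:-deepseek-v4-flash}"

# Ask a single question and exit, instead of opening the chat prompt.
ask_once() {
  ollama run "$MODEL" "$1"
}

# Send a file's contents along with an instruction to summarise it.
summarise_file() {
  ollama run "$MODEL" "Summarise the following text in three bullet points: $(cat "$1")"
}

# Usage:
#   ask_once "What does HTTP 429 mean?"
#   summarise_file notes.txt
```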

Step 4: Test It With a Real Question

First thing I do with any new model — give it a real task.

I asked DeepSeek V4 to explain why my React component was re-rendering on every state change.

It thought for a beat (in Chinese — that's a quirk you'll see), then dropped a clean English answer with a code fix.

Sharp. Fast. No fluff.

That's the moment you realise this isn't a toy.

Step 5: Open New Terminal Tabs for Parallel Work

Cmd+T on Mac opens a new terminal tab.

Hit it five times.

Now you can run:

- Tab 1 — Claude Code pointed at DeepSeek V4

- Tab 2 — Codex pointed at DeepSeek V4

- Tab 3 — Open Code building a page

- Tab 4 — OpenClaw browser automation

- Tab 5 — Hermes scheduled agents

- Tab 6 — raw Ollama chat for quick questions

All using the same DeepSeek V4 Ollama install. All in parallel.
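If you'd rather not juggle tabs for simple one-off prompts, the same parallel idea works from a single shell using background jobs. This is a stand-in for the tab workflow, not a replacement for the harnesses, and run_parallel is a hypothetical helper. Bear in mind that firing lots of requests at once may hit the free tier's rate limits.

```shell
#!/usr/bin/env bash
MODEL="${MODEL:-deepseek-v4-flash}"

# Run each prompt as a background job and save answers to numbered files.
# First argument is the output directory; the rest are prompts.
run_parallel() {
  local outdir="$1"; shift
  local i=0 prompt
  mkdir -p "$outdir"
  for prompt in "$@"; do
    i=$((i + 1))
    ollama run "$MODEL" "$prompt" > "$outdir/answer_$i.txt" &
  done
  wait   # block until every background job has finished
}

# Usage:
#   run_parallel ./answers "Explain CORS briefly" "Write a regex for UK postcodes"
```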

Wiring DeepSeek V4 Into Claude Code

This is where it gets properly fun.

Claude Code's planning and tool-use loop is brilliant. DeepSeek V4 is a frontier model. Combine them and you get Claude's harness driving DeepSeek's brain.

The setup is just a config tweak — point Claude Code at your local Ollama endpoint and tell it which model to use.

Pairs nicely if you've already followed Claude Code AI SEO — same harness, smarter free brain.
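For a rough idea of what that config tweak can look like, here's a sketch using environment variables. Big assumptions here: that your Ollama build exposes an Anthropic-compatible API on the default local port, and that the token value is just a placeholder the local server ignores. ANTHROPIC_BASE_URL, ANTHROPIC_AUTH_TOKEN and ANTHROPIC_MODEL are variables Claude Code reads, but check both tools' current docs before relying on any of this.

```shell
#!/usr/bin/env bash
# Assumption: Ollama serves an Anthropic-compatible API at this address.
export ANTHROPIC_BASE_URL="${OLLAMA_URL:-http://localhost:11434}"

# Assumption: the local server does not validate this value.
export ANTHROPIC_AUTH_TOKEN="ollama"

# Model name from the install steps; copy yours from ollama.com.
export ANTHROPIC_MODEL="${MODEL:-deepseek-v4-flash}"

# Then start Claude Code from this same shell so it inherits the variables:
#   claude
```

Setting these in one terminal tab only affects that tab, so your normal Claude Code setup elsewhere stays untouched.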

OpenClaw + DeepSeek V4 for Browser Automation

OpenClaw runs as a local web UI on a gateway port.

Once it's wired to DeepSeek V4 Ollama, you can give it browser commands in plain English.

I told it "go speak to ChatGPT" — it opened ChatGPT in my browser and started typing a query, instantly.

No script. No hardcoded automation. Just the model deciding what to click.

This is the kind of magic OpenClaw with Opus 4.7 was doing on paid models a few months ago. Now it's free.

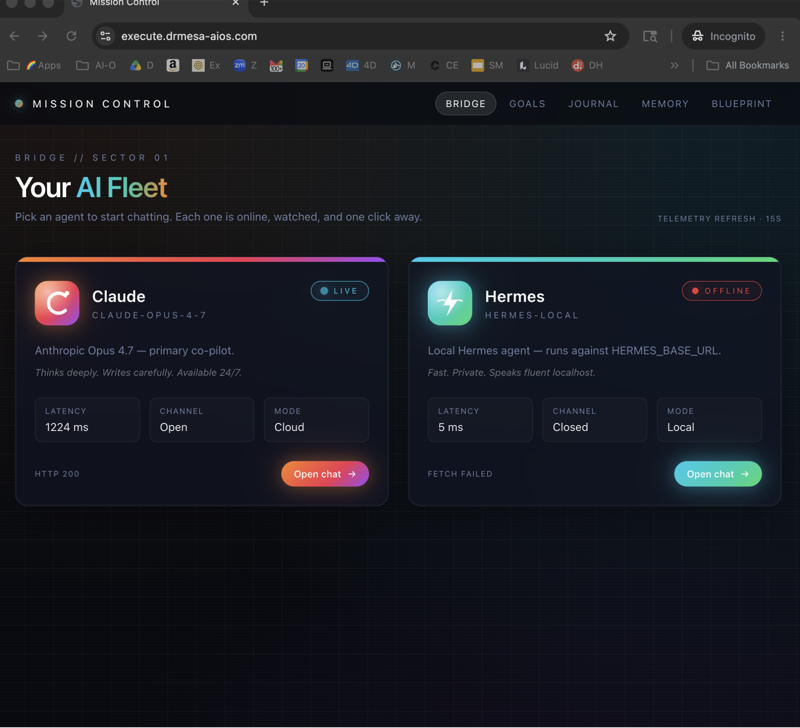

Hermes for the Scheduled Stuff

Hermes is the harness for "fire and forget" agents.

I have one running daily that researches AI automation news and pings me a summary by 9am.

Setup time: under a minute.

Running cost: zero.

If you've been following the Hermes vs OpenClaw breakdown, this is the moment to actually flip the switch and build with it.
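I'm not reproducing Hermes config here. But to show the general "fire and forget" shape, this is a plain cron stand-in, which is a different technique from Hermes. The function name, output folder and script path are all hypothetical. Note the raw model can't browse, so this version only generates a research checklist rather than fetching news.

```shell
#!/usr/bin/env bash
MODEL="${MODEL:-deepseek-v4-flash}"

# Write today's briefing to a dated file in the given directory.
# (Plain cron stand-in; this is NOT how Hermes itself is configured.)
daily_briefing() {
  local outdir="${1:-$HOME/briefings}"
  mkdir -p "$outdir"
  ollama run "$MODEL" \
    "List five questions I should research about AI automation today." \
    > "$outdir/$(date +%F).txt"
}

# Example crontab entry, 08:30 every day (script path is hypothetical):
#   30 8 * * * /bin/bash -lc 'source ~/bin/briefing.sh && daily_briefing'
```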

What DeepSeek V4 Ollama Won't Do

Let's be honest about the limits.

Raw DeepSeek V4 in your terminal can't:

- Browse the web

- Run shell commands

- Schedule itself

- Use tools

For all of that, you need a harness — that's what Claude Code, OpenClaw and Hermes are for.

The DeepSeek V4 Ollama install is the brain. The harnesses are the hands.

Don't expect the brain to do hand-work.

The Free Stack I Now Run Daily

After two weeks of testing, this is my full stack:

- DeepSeek V4 Flash via Ollama — the model

- Claude Code — coding sessions

- Codex — quick coding builds (made me a ping pong game in five minutes)

- Open Code — page building

- OpenClaw — browser automation

- Hermes — scheduled research and outreach

Total cost: £0/month.

Total capability: more than I had with £200/month subscriptions last year.

Troubleshooting Your DeepSeek V4 Ollama Install

If something goes sideways, here's the order to check:

- Ollama version: run ollama --version, must be recent

- Model name spelling: copy exact from ollama.com/models

- Internet connection: cloud model, needs network

- Free tier limits: if you've hammered it, you might be rate-limited

- Firewall blocking the Ollama daemon: rare but happens on locked-down work machines

Ninety percent of issues are point 1 or 2.
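A few of those checks can be scripted. Here's a minimal sketch that tests whether the CLI is installed, whether the local Ollama server answers, and whether ollama.com is reachable. It assumes the default local port and the /api/version endpoint; check and run_checks are my own helper names. It can't verify model spelling or rate limits, and if your cloud access depends on being signed in to an ollama.com account, that's worth checking by hand too.

```shell
#!/usr/bin/env bash
# Print "PASS <label>" or "FAIL <label>" depending on whether the
# command given after the label succeeds.
check() {
  local label="$1"; shift
  if "$@" >/dev/null 2>&1; then
    echo "PASS $label"
  else
    echo "FAIL $label"
    return 1
  fi
}

# Run the checks in roughly the order listed above.
run_checks() {
  check "ollama CLI on PATH" command -v ollama
  check "local Ollama server responding" \
    curl -fsS --max-time 5 "${OLLAMA_URL:-http://localhost:11434}/api/version"
  check "ollama.com reachable" \
    curl -fsS --max-time 10 -o /dev/null https://ollama.com
}

# Usage: run_checks
```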

Related reading

- DeepSeek V4 Tutorial — the model deep-dive if you want background

- Claude Code AI SEO — the harness primer that pairs with V4 perfectly

- Hermes vs OpenClaw — pick the right harness for your DeepSeek V4 use case

FAQ

How long does the DeepSeek V4 Ollama install take?

About four minutes if your internet is decent. There's no model download — that's the whole trick. You're just installing the Ollama CLI and pointing it at the cloud model.

What's the difference between DeepSeek V4 Flash and the regular V4?

Flash is the version Ollama's hosting in the cloud free tier. Smaller, faster, optimised for low-latency responses. Plenty good enough for 95% of agent work.

Can I run DeepSeek V4 Ollama on Windows?

Yes — Ollama has a Windows installer on ollama.com. Same workflow, same model name.

Does DeepSeek V4 Ollama work offline?

No. It's a cloud model — it needs internet to reach Ollama's servers. If you want offline, you need a local model like Llama or local DeepSeek variants.

Why is DeepSeek V4 Ollama free when other frontier models cost £20/month?

Ollama's free tier is subsidised right now to drive adoption of their cloud-model platform. Whether that lasts forever is anyone's guess — I'm using it heavily while it's here.

Can I use DeepSeek V4 Ollama for client work?

Check the terms on ollama.com before you commit. As I read them right now, the free tier is fine for commercial use within rate limits, but terms can change. For high-volume production, look at the paid tier.

The DeepSeek V4 Ollama install is the lowest-friction way to put a frontier model on your machine — get it running today and you'll wonder why you ever paid for the alternatives.