Hermes Gemma 4 is the setup I'd recommend to anyone who's tired of paying stupid money for API tokens.

Here's the honest truth.

Most people waste $200 a month on AI APIs because nobody told them the local stuff finally works.

Gemma 4 + Hermes changes the game.

You can run an actual agent on your machine, for free, forever, with a bigger context window than MiniMax M2.7.

That's not hype. That's maths.

Let me show you how it all clicks together.

What Hermes Gemma 4 Actually Is

Two pieces.

Gemma 4 is Google's latest open-source model family, built to be lightweight and efficient. It shipped in a few variants, and the biggest ones push a 256K context window.

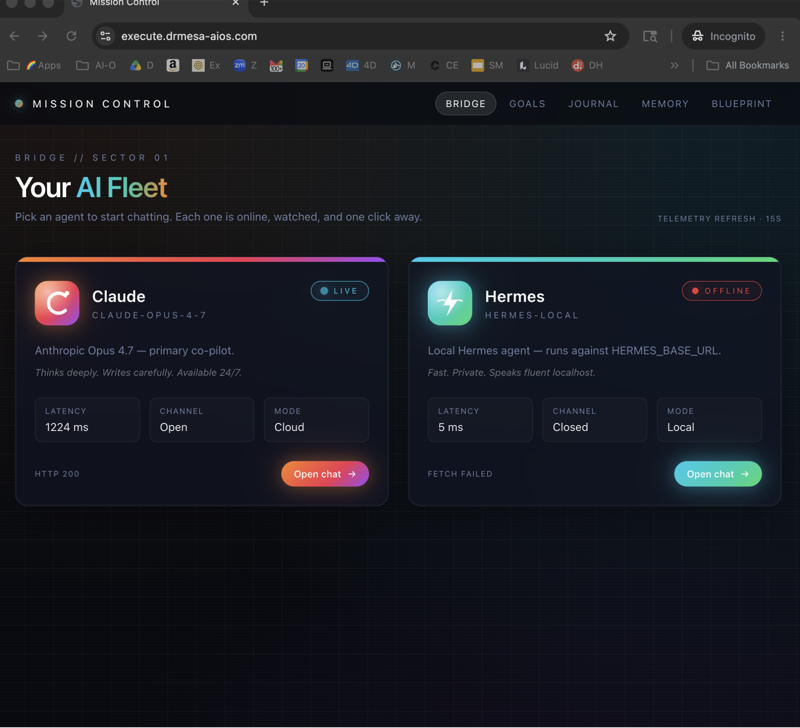

Hermes — the AI agent I've been running for about six months now. It orchestrates tasks, calls tools, spawns sub-agents, writes code.

Put them together and you've got a self-hosted agent that runs locally, for free, with no rate limits and no monthly bill.

That's Hermes Gemma 4.

If you're new to Hermes itself, I'd go read my Hermes agent workspace breakdown first to get the lay of the land.

Then come back here for the Gemma 4 part.

Why I Actually Care About This

I run a lot of agents.

Some days I'll have 30+ Hermes sessions open across different machines.

If every one of those sessions was burning Claude Opus tokens, I'd be broke.

So the real trick isn't "which single model is best" — it's "which stack of models is best".

For the heavy lifting, I use Claude Opus 4.7. I covered that in Claude Opus 4.7 for AI SEO.

For the 10x sub-agents underneath — the ones just grinding through batches of work — I use Hermes Gemma 4.

Because it's free.

And it's good enough.

That combination is what makes running an AI business sustainable.

The Setup In 5 Steps

1. Install Ollama

Go to ollama.com.

Copy the install command they show you.

Paste it into your terminal.

Hit enter.

That's it.

Ollama now runs quietly in the background on your machine. It's the thing that hosts Gemma 4 locally and exposes it over a URL.
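
If you want to confirm that URL is actually live before going further, here's a minimal sketch. It assumes the default address http://localhost:11434 and uses Ollama's /api/version endpoint. The function names are mine, not part of Ollama or Hermes.

```python
import json
import urllib.error
import urllib.request
from typing import Optional

OLLAMA_URL = "http://localhost:11434"  # assumed default; change it if you moved Ollama


def version_url(base_url: str) -> str:
    """Build the address of Ollama's version endpoint from a base URL."""
    return base_url.rstrip("/") + "/api/version"


def ollama_version(base_url: str = OLLAMA_URL, timeout: float = 2.0) -> Optional[str]:
    """Return the running Ollama version, or None if nothing answers."""
    try:
        with urllib.request.urlopen(version_url(base_url), timeout=timeout) as resp:
            return json.load(resp).get("version")
    except (urllib.error.URLError, OSError, ValueError):
        # Connection refused, timeout, or a reply that is not JSON.
        return None


if __name__ == "__main__":
    version = ollama_version()
    print(f"Ollama {version} is running" if version else "Ollama is not reachable")
```

If it prints "not reachable", nothing later in this guide will work until Ollama is started.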

2. Pull Gemma 4

On ollama.com, go to the Models page.

Click "Gemma 4".

You'll see a few variants listed:

- Smaller Gemma 4 variants: around 128K context. These run on nearly anything.

- Larger Gemma 4 variants (18GB, 20GB): 256K context. These are the ones with a bigger context window than MiniMax M2.7.

Pick one.

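Ollama gives you a terminal command to install it, in the form ollama pull followed by the model tag. Copy the exact tag from the model page rather than typing it from memory, because the tag differs per variant.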

Run it.

Wait for the download.

Done.
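
To double-check the download landed, you can ask Ollama which models it has. This is a rough sketch against Ollama's /api/tags endpoint. The tag "gemma4:latest" in the sample is a made-up placeholder, so swap in whatever tag the model page gave you.

```python
import json
import urllib.request
from typing import List


def model_names(tags_response: dict) -> List[str]:
    """Pull the model names out of an /api/tags style response."""
    return [m["name"] for m in tags_response.get("models", [])]


def find_models(names: List[str], family: str) -> List[str]:
    """Keep names whose part before the ':' tag starts with the family name."""
    return [n for n in names if n.split(":")[0].startswith(family)]


def fetch_tags(base_url: str = "http://localhost:11434", timeout: float = 5.0) -> dict:
    """Ask a running Ollama for its local models (needs Ollama to be up)."""
    with urllib.request.urlopen(base_url.rstrip("/") + "/api/tags", timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Sample data so the sketch runs without Ollama; the names are placeholders.
    sample = {"models": [{"name": "gemma4:latest"}, {"name": "llama3:8b"}]}
    print(find_models(model_names(sample), "gemma"))
```

Against a live install, call find_models(model_names(fetch_tags()), "gemma") instead of using the sample.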

3. Fire Up Hermes

Open a new Hermes chat in your terminal.

Type:

hermes model

A list of available endpoints appears.

You'll see the usual suspects — cloud providers, built-in options — and right near the bottom, "Custom Endpoint".

Select that.

4. Point Hermes At Ollama

Hermes now asks for a URL.

This is where you paste the Ollama local URL.

Ollama runs on http://localhost:11434 by default.

Paste that in.

Critical: Ollama has to be running in the background for this to work. If you've just rebooted, open a second terminal and run ollama serve to start it. After that, ollama list is a quick way to confirm it's answering.

Next, Hermes asks for an API key.

Two options, both work:

- Leave it blank

- Type "Ollama"

Either one. Honestly doesn't matter. I usually type "Ollama" because I like seeing it there.

Hit enter.
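
If you're curious what a custom endpoint boils down to, here's a sketch of the kind of request a client can send to Ollama. I'm assuming Hermes talks the OpenAI-style chat protocol, which Ollama serves under /v1/chat/completions. I haven't confirmed what Hermes sends internally, and the function names and the "gemma4:latest" tag are placeholders of mine.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "") -> urllib.request.Request:
    """Build an OpenAI-style chat request aimed at a local Ollama."""
    headers = {"Content-Type": "application/json"}
    if api_key:
        # A local Ollama does not check this value, which is why blank or
        # "Ollama" both work; it only matters to clients that insist on a key.
        headers["Authorization"] = f"Bearer {api_key}"
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the reply text (needs Ollama running)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("http://localhost:11434", "gemma4:latest",
                             "Say hello in five words.", api_key="Ollama")
    print(req.full_url)
```

Calling send(req) with Ollama running and the model pulled returns the model's answer as plain text.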

5. Pick Gemma 4 And Run

Hermes now shows you the models it found through Ollama.

You'll see Gemma 4 latest (plus any other models you've pulled).

Select Gemma 4 latest.

Leave the next prompt blank.

Run Hermes.

Boom.

You're now running Hermes Gemma 4 locally. Free. Forever.

🔥 Want to skip the trial and error? Inside the AI Profit Boardroom, I've got a full Ollama + Hermes section with step-by-step video tutorials showing the exact clicks and commands. Daily trainings in the SOP section show you real workflows — like yesterday's run-through of the new Hermes 0.7 update. Plus weekly coaching calls where you can share your screen and get help with YOUR setup. 3,000+ members already inside. → Join the Boardroom here

The Context Window Thing Is Bigger Than People Realise

Here's a thing that doesn't get enough airtime.

The bigger Gemma 4 variants — the 18GB and 20GB versions — run with a 256K context window.

Let me say that again.

256K.

Local.

Free.

That's bigger than MiniMax M2.7.

Bigger than a lot of frontier cloud models.

When I realised this, I nearly dropped my coffee.

What does a 256K context window let you do?

- Dump an entire medium-sized codebase into the context and let Hermes reason across the whole thing

- Feed in hours of meeting transcripts and ask for themes

- Give it a full product spec + all competitor research + analytics, and get a coherent strategic answer

- Run a single Hermes sub-agent on a long research task without worrying about the context filling up

All of that, for free, on your own machine.
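
Before you dump a whole codebase in, it's worth a quick sanity check on whether it fits. Here's a back-of-the-envelope sketch. The 4 characters per token figure is a rough rule of thumb for English text, not Gemma 4's real tokenizer, and I'm treating 256K as 256,000 tokens with some room held back for the reply. All of those numbers are assumptions you should tune.

```python
import math

CHARS_PER_TOKEN = 4.0  # rough assumption for English prose, not a real tokenizer


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Estimate the token count of a text from its length in characters."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text: str, context_tokens: int = 256_000,
                    reserve_for_reply: int = 8_000) -> bool:
    """True if the text plus space reserved for the answer fits the window."""
    return estimate_tokens(text) <= context_tokens - reserve_for_reply


if __name__ == "__main__":
    transcript = "word " * 150_000  # stand-in for hours of meeting transcripts
    print(estimate_tokens(transcript), fits_in_context(transcript))
```

Code and non-English text usually tokenize less efficiently than prose, so treat a near-miss as a "no".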

If you've ever been rate-limited mid-task on a cloud model at exactly the wrong moment, you know how much that matters.

Hermes Gemma 4 vs Hermes Cloud Models

I'm not going to lie to you.

Cloud models are still smarter on the hardest tasks.

If you give Gemma 4 a PhD-level reasoning question, it won't touch Claude Opus or MiniMax M2.7.

But here's the thing — most of what agents do isn't PhD-level reasoning.

It's:

- Summarising documents

- Generating variations

- Filling in templates

- Running searches and pulling facts

- Writing boilerplate code

- Classifying stuff

- Tagging and organising

For all of that, Gemma 4 is plenty.

My rule of thumb:

- Orchestration / hardest reasoning → Claude Opus 4.7 or MiniMax M2.7

- Sub-agents / bulk work / private data → Hermes Gemma 4
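
That rule of thumb is simple enough to write down as code. This is only a sketch of my own routing logic, not something built into Hermes, and the task labels are hypothetical names I picked for illustration.

```python
from enum import Enum


class Tier(Enum):
    CLOUD = "cloud"  # Claude Opus 4.7 or MiniMax M2.7
    LOCAL = "local"  # Hermes Gemma 4 through Ollama


# Hypothetical labels for the jobs that deserve the expensive model.
HARD_TASKS = {"orchestration", "hard_reasoning"}


def pick_tier(task_kind: str, private_data: bool = False) -> Tier:
    """Route a task to the cloud or the local model using the rule of thumb."""
    if private_data:
        # Private data stays on the machine, whatever the task is.
        return Tier.LOCAL
    return Tier.CLOUD if task_kind in HARD_TASKS else Tier.LOCAL


if __name__ == "__main__":
    for kind in ("orchestration", "summarise", "classify"):
        print(kind, "->", pick_tier(kind).value)
```

Anything not on the hard list defaults to local, which matches how I run it: cloud is the exception, not the default.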

Quick Bonus: Running MiniMax M2.7 Through The Same Setup

The custom endpoint trick works for more than Gemma 4.

If you want to run MiniMax M2.7 cloud through Hermes:

- Run hermes model

- Select custom endpoint

- Type "2" for MiniMax M2.7 cloud

- Leave API key blank

- Run Hermes

Done.

MiniMax M2.7 is an agentic, self-improving model. It's literally built to run tools.

You can even run OpenClaw with MiniMax M2.7 on top of Ollama. I cover that deeper in OpenClaw Byterover and the full OpenClaw AI SEO breakdown.

The point is — once you've got the custom endpoint flow down, you can swap models on a whim.

Hermes is the conductor.

Gemma 4, MiniMax M2.7 and Claude Opus are the musicians.

Common Gotchas I've Hit With Hermes Gemma 4

A few headaches worth flagging:

Ollama not running. The single most common issue. Hermes tries to connect to the URL, gets no reply, errors out. Fix: run ollama list in a second terminal. If it hangs or errors, restart Ollama.

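Wrong URL. Make sure you're pasting the local Ollama URL (usually http://localhost:11434), not some weird variation. Hermes is picky. If the bare address gets rejected, try http://localhost:11434/v1, which is the path where Ollama serves its OpenAI-compatible API.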

Model not listed. If Gemma 4 doesn't appear in the Hermes model list, you probably didn't pull it properly. Run the install command again from ollama.com's Gemma 4 page.

Machine too slow. If you picked a 20GB variant on a laptop with 8GB RAM, it'll crawl. Drop to a smaller Gemma 4 variant.

Weird outputs. Local models can be a bit less polished than cloud. Prompt them like you're talking to a junior — a bit more specific, a bit more structured.
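
The first and third gotchas can be told apart with one small check. Here's a sketch, assuming Ollama's /api/tags endpoint on the default URL. The diagnose function and its messages are mine, and it takes the fetcher as an argument so you can try it without Ollama installed.

```python
import json
import urllib.request
from typing import Callable


def fetch_tags(base_url: str = "http://localhost:11434", timeout: float = 5.0) -> dict:
    """Ask a running Ollama for its local models."""
    with urllib.request.urlopen(base_url.rstrip("/") + "/api/tags", timeout=timeout) as resp:
        return json.load(resp)


def diagnose(fetch: Callable[[], dict], wanted_family: str = "gemma") -> str:
    """Tell 'Ollama not running' apart from 'model not pulled'."""
    try:
        tags = fetch()
    except OSError:
        # Covers connection refused and timeouts (URLError is an OSError).
        return "Ollama is not reachable: start it, then re-check the URL."
    names = [m["name"] for m in tags.get("models", [])]
    if not any(n.startswith(wanted_family) for n in names):
        return "Ollama is up but the model is missing: pull it again."
    return "OK: " + ", ".join(names)


if __name__ == "__main__":
    print(diagnose(fetch_tags))
```

Run it before opening Hermes and you'll know which of the two fixes you need.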

FAQ — Hermes Gemma 4

What is Hermes Gemma 4?

It's the pairing of the Hermes AI agent with Google's open-source Gemma 4 model, running locally via Ollama. The result is a fully free AI agent that runs on your own machine with no API bills.

How do I install Hermes Gemma 4?

Install Ollama from ollama.com and pull Gemma 4 with the command shown on its ollama.com Models page. Then run hermes model, select custom endpoint, paste the Ollama URL, set the API key to "Ollama" (or leave it blank), and select Gemma 4 latest.

Is Hermes Gemma 4 really free forever?

Yes. Gemma 4 and Ollama are open-source, and Hermes doesn't charge to point at a local model. Your only cost is the electricity to run your own machine.

How big is the Gemma 4 context window?

The smaller Gemma 4 variants run at around 128K context. The larger 18GB and 20GB variants run at 256K context — bigger than many frontier cloud models including MiniMax M2.7.
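
One caveat worth knowing: as far as I know, Ollama doesn't automatically load a model at its maximum context, and its default can be much smaller. When you call Ollama's native /api/chat endpoint yourself, you can ask for a bigger window with the num_ctx option. The sketch below shows that request. Whether Hermes sets this for you is something I haven't verified, and the model tag is a placeholder.

```python
import json
import urllib.request


def build_native_chat_request(base_url: str, model: str, prompt: str,
                              num_ctx: int) -> urllib.request.Request:
    """Build an Ollama /api/chat request that asks for a given context size."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,                  # one JSON reply instead of a stream
        "options": {"num_ctx": num_ctx},  # requested context length in tokens
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/chat",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 600.0) -> str:
    """Send the request and return the reply text (needs Ollama running)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["message"]["content"]


if __name__ == "__main__":
    req = build_native_chat_request("http://localhost:11434", "gemma4:latest",
                                    "Summarise this spec.", num_ctx=131_072)
    print(json.loads(req.data)["options"])
```

A bigger num_ctx costs more memory, so raise it in steps rather than jumping straight to the maximum.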

Can Hermes Gemma 4 run tools?

Yes. Gemma 4 is one of the new wave of Ollama-compatible models designed for agentic use. It handles tool calling and works well as a sub-agent inside Hermes.

Does Hermes Gemma 4 replace cloud models?

Not entirely. Cloud models like Claude Opus 4.7 and MiniMax M2.7 are still sharper on the hardest reasoning tasks. Use Hermes Gemma 4 for sub-agents, bulk work, private data, and jobs where free + unlimited matters more than peak intelligence.

Related Reading

- Hermes agent workspace — how the Hermes workspace is laid out

- Ollama + Hermes — the foundation walkthrough for Ollama-powered Hermes

- Claude Opus 4.7 for AI SEO — the cloud-model counterpart

Closing Thoughts

If you've been sitting on the fence about local AI models, Hermes Gemma 4 is the moment to jump.

256K context.

Free forever.

Easy setup.

Works with sub-agents.

Nobody else on the internet explains this setup as simply as I just did.

Go run it.

🚀 Want to go from tutorial to actual business results? Inside the AI Profit Boardroom, I've got a 2-hour course on exactly how to use Hermes to save time and grow a real AI business. Plus a 6-hour OpenClaw course, daily trainings in the SOP section, weekly coaching calls, and 145 pages of member wins. Map view to connect with people in your local area. The classroom has the best trainings on AI automation anywhere on the internet. → Get access here

Run Hermes Gemma 4 today and stop paying for stuff you can get for free.