OpenClaw Kimi K2.6 is the agent stack I've been running all week, and it's the cleanest free setup I've tested in months.

Let me cut straight to it.

No intro fluff.

No "in this article we'll cover…"

You want the setup.

Here's the setup.

OpenClaw Kimi K2.6: The 30-Second Version

One-click install Ollama.

Pick Kimi K2.6 in Models.

Run with OpenClaw.

Gateway fires up.

You've got an open-source agentic AI in roughly 5 minutes.

That's the whole story.

Now let me show you the bits that matter.

Why I Chose Kimi K2.6 Over Other Models

I test a lot of models.

Most sound clever but fall flat when you ask them to do something.

Kimi K2.6 is different.

It's an open-source agentic model — designed from day one for agent workflows.

Tool calls.

Multi-step reasoning.

Web research.

That's what it's trained for.

Kimi's own blog post specifically names OpenClaw and Hermes as the two tools it's built to pair with.

When the model's own team tells you "use these two shells," you listen.

The Setup, Step by Step

1. One-Click Install Ollama

Grab the one-click setup command.

Paste it into your terminal.

This installs Ollama or updates you to the latest version.

Don't skip the update part.

Older Ollama builds don't ship with the Kimi K2.6 cloud dropdown — you'll stare at the Models page wondering where it went.
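If you want to confirm which build you ended up with, here's a rough sketch. It assumes `ollama` is on your PATH and that `ollama --version` prints a dotted version number somewhere in its output. `MIN_VERSION` is a placeholder: this post doesn't say which build first added the Kimi K2.6 cloud option, so swap in the real number once you know it.

```python
import re
import shutil
import subprocess

# Placeholder threshold (assumption): replace with the build that lists Kimi K2.6.
MIN_VERSION = (0, 0, 0)


def parse_version(text):
    """Return the first dotted version found in text as a tuple of ints, or None."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        return None
    return tuple(int(part) for part in match.groups())


def installed_ollama_version():
    """Run `ollama --version` and parse the result; None if Ollama is not on PATH."""
    if shutil.which("ollama") is None:
        return None
    result = subprocess.run(
        ["ollama", "--version"], capture_output=True, text=True, check=False
    )
    return parse_version(result.stdout + result.stderr)


def is_new_enough(version, minimum=MIN_VERSION):
    """Compare version tuples element by element; a missing version never passes."""
    return version is not None and version >= minimum


if __name__ == "__main__":
    version = installed_ollama_version()
    print("Ollama version:", version, "| new enough:", is_new_enough(version))
```

If it prints `None`, Ollama isn't installed (or isn't on your PATH) and the one-click command hasn't done its job yet.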

2. Find Kimi K2.6 in Models

Go to Models inside Ollama.

Look for Kimi K2.6.

Open-source agentic model, free within token limits.

3. Pull or Run With OpenClaw

Two paths:

- Pull the model into Ollama directly.

- Or run it with OpenClaw directly.

I pick the second one.

Fewer steps.

Same result.

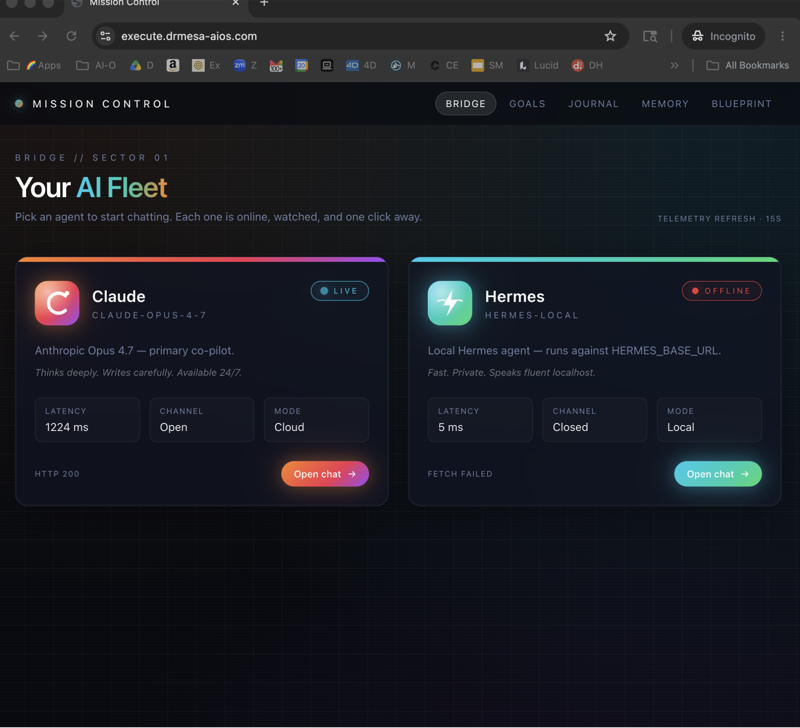

4. Check the Gateway

Running the model starts the gateway.

Open it.

Go to OpenClaw.

Confirm it's running.

Works straight away.
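If you'd rather check from a script than click around, a minimal sketch looks like this. The only address I'm sure of is Ollama's default local server at `http://localhost:11434/`. The OpenClaw gateway address isn't given in this post, so `is_reachable` takes any URL and you pass in whatever address your gateway reports on startup.

```python
import http.client
import urllib.request

# Ollama's local server listens on this address by default.
OLLAMA_URL = "http://localhost:11434/"


def is_reachable(url, timeout=2.0):
    """Return True if an HTTP GET to url comes back with a 2xx or 3xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except (OSError, ValueError, http.client.HTTPException):
        # OSError covers refused connections, timeouts, and HTTP error statuses;
        # ValueError covers a malformed URL.
        return False


if __name__ == "__main__":
    print("Ollama reachable:", is_reachable(OLLAMA_URL))
    # Gateway check: replace the placeholder with your own gateway address.
    # print("Gateway reachable:", is_reachable("http://localhost:<your-gateway-port>/"))
```

A `False` here usually just means the service isn't running yet, so start it and try again.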

This is the same gateway pattern I walked through in my OpenClaw AI SEO tutorial — if you've done that one, none of this will feel new.

The Real-World Test

I tested OpenClaw Kimi K2.6 with a classic agent prompt.

"Research the web for the latest AI news today."

Response came back fast.

Three fresh news releases.

Clean summary.

Didn't sound like a chatbot reading a script.

Didn't pad it out with "Based on my research…"

Just the news.

I pushed it harder: "Check what happened today in AI automation."

It grabbed the web tool.

Used it properly.

Reported back with current info.

That's what an agentic model is supposed to do.
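To make "grabbed the web tool" concrete, here's a sketch of what a tool-calling exchange can look like at the API level. It follows the `tools` format of Ollama's chat endpoint, but the `web_search` tool, its schema, and the `kimi-k2.6:cloud` model tag are all assumptions for illustration. How OpenClaw actually wires up its web tool isn't shown in this post, and the sample response is hand-written, not real output.

```python
import json


def build_chat_payload(model, prompt):
    """Build a chat request body that offers the model one (hypothetical) tool."""
    return {
        "model": model,
        "stream": False,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "web_search",  # hypothetical tool name
                    "description": "Search the web and return recent results.",
                    "parameters": {
                        "type": "object",
                        "properties": {"query": {"type": "string"}},
                        "required": ["query"],
                    },
                },
            }
        ],
    }


def requested_tool_calls(response):
    """Return (tool_name, arguments) pairs the model asked for, or an empty list."""
    calls = response.get("message", {}).get("tool_calls") or []
    return [(call["function"]["name"], call["function"]["arguments"]) for call in calls]


if __name__ == "__main__":
    payload = build_chat_payload(
        "kimi-k2.6:cloud",  # assumed tag, check the Models page for the real one
        "Check what happened today in AI automation.",
    )
    print(json.dumps(payload, indent=2))

    # Hand-written sample of an assistant turn that asks for a tool call.
    sample = {
        "message": {
            "role": "assistant",
            "content": "",
            "tool_calls": [
                {"function": {"name": "web_search", "arguments": {"query": "AI automation news"}}}
            ],
        }
    }
    print(requested_tool_calls(sample))
```

The point: an agentic model answers with a structured request to run a tool, the shell runs it, and the result goes back into the conversation. A chat-only model just writes text.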

OpenClaw Kimi K2.6 vs Gemma 4: The Pricing Truth

Here's the bit nobody's telling you clearly.

Kimi K2.6 = cloud model.

Free within token limits.

Heavy daily usage will hit the wall unless you're on Ollama's upgraded plan.

Gemma 4 = local model.

Unlimited usage when running locally.

My rule:

- Use Kimi K2.6 when you need agentic behaviour (tool calls, web research, multi-step tasks).

- Use Gemma 4 when you need volume and don't care about agent features.
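That rule is simple enough to write down as code. This is only a sketch of my own rule of thumb: the model tags are assumptions (check the Models page for the real names), and `Task` is a made-up helper, not part of OpenClaw or Ollama.

```python
from dataclasses import dataclass

KIMI = "kimi-k2.6:cloud"  # assumed tag for the cloud model
GEMMA = "gemma4"          # assumed tag for the local model


@dataclass
class Task:
    """Hypothetical description of a job you want to hand to a model."""
    needs_tools: bool = False  # tool calls or web research
    multi_step: bool = False   # multi-step agent work


def pick_model(task):
    """Agentic work goes to Kimi K2.6; everything else goes to local Gemma 4."""
    if task.needs_tools or task.multi_step:
        return KIMI
    return GEMMA


if __name__ == "__main__":
    print(pick_model(Task(needs_tools=True)))  # agent job
    print(pick_model(Task()))                  # bulk, non-agent job
```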

Grab Gemma 4 from ollama.com under Models > Gemma 4.

Run it locally.

Run it all day.

Nobody's stopping you.

The Hermes Route (Kimi's Second Blessed Shell)

OpenClaw isn't the only option.

Kimi's blog specifically called out Hermes as well.

Hermes recently got Ollama support baked in.

To run Kimi with Hermes:

- Install Hermes with Ollama support.

- Run `launch Hermes` inside terminal.

- On setup, select Kimi.

Done.

Runs nicely together.

If you're torn between shells, my Hermes vs OpenClaw comparison breaks down which one fits which workflow.

Short answer: OpenClaw for quick agents, Hermes for the full agent workspace vibe.

Atomic Chat: No Terminal, Same Power

Most people aren't technical enough to enjoy terminals.

That's the honest truth.

Terminals make everything harder for most of the audience.

Atomic Chat is the fix.

Nice UI.

Huge model selection.

Kimi K2.6 cloud available right there.

Dropdown to swap between models mid-chat.

Same tools under the hood.

Here's the setup path:

- Go to Atomic Chat.

- Click AI Models > API Keys.

- Pick Kimi K2.6 cloud.

- Get your API key from Ollama Settings > Keys.

- In Atomic Chat, select Ollama in the local model section.

- Pick Kimi K2.6 cloud.

- Hit Save.

- Go to Dashboard > Chat.

- Paste the API key from Ollama.

- Save again.

- Select the Kimi K2.6 cloud (Ollama) entry from the model list.

- Start sending messages.

That's the whole dance.

Same setup.

Zero terminal.
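If you're curious what an app does with that key, here's a hedged sketch of a direct call to Ollama's cloud API with a bearer token. I don't know how Atomic Chat builds its requests internally, so treat this as an illustration only. The `kimi-k2.6` model name is an assumption, and the key is read from an `OLLAMA_API_KEY` environment variable that you'd set yourself.

```python
import json
import os
import urllib.request

CLOUD_CHAT_URL = "https://ollama.com/api/chat"
MODEL = "kimi-k2.6"  # assumed name, check the Models page for the exact tag


def build_cloud_request(api_key, prompt, model=MODEL):
    """Build (but do not send) an authenticated chat request."""
    body = json.dumps(
        {
            "model": model,
            "stream": False,
            "messages": [{"role": "user", "content": prompt}],
        }
    ).encode("utf-8")
    return urllib.request.Request(
        CLOUD_CHAT_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("OLLAMA_API_KEY")
    if key:
        print(send(build_cloud_request(key, "Say hello in one sentence.")))
    else:
        print("Set OLLAMA_API_KEY to try this.")
```

Keep the key out of your code and out of screenshots. An environment variable is the low-effort way to do that.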

Kimi K2.6 With Other Shells (Quick Notes)

Kimi works with:

- OpenCode — if you prefer a coding-first shell.

- Codex — for coding tasks.

- Claude Code — yes, you can route it there too.

But setup is fastest with OpenClaw or Hermes.

That's not my opinion — that's what Kimi's team published.

What I'd Do If I Was Starting Today

If I was zero hours in on AI agents and wanted to move fast, here's the exact order I'd follow.

- Install Ollama (one-click).

- Install OpenClaw.

- Pull Kimi K2.6.

- Fire a simple research prompt.

- If I love it, follow my OpenClaw 4.20 update guide to squeeze the latest features out of the shell.

- For heavy daily usage, switch to Gemma 4 local for volume work.

That's it.

No courses needed.

No 12 YouTube tabs open.

Just this.

FAQ: OpenClaw Kimi K2.6

How long does OpenClaw Kimi K2.6 take to set up?

About 5 minutes if you've already got Ollama installed.

Roughly 10 minutes fresh from scratch, including the one-click install and the gateway check.

Is OpenClaw Kimi K2.6 actually free?

Yes — within Ollama's cloud token limits.

It's a cloud model, so heavy daily usage needs Ollama's upgraded plan.

For unlimited usage, switch to Gemma 4 local.

Do I need a GPU for Kimi K2.6?

No — Kimi K2.6 is a cloud model, so the compute happens on Ollama's side.

That's the trade-off: no GPU required, but token-limited.

Local models like Gemma 4 need your machine to run them but are unlimited.

Does OpenClaw Kimi K2.6 work on Mac and Windows?

Yes — both OpenClaw and Ollama run on Mac and Windows.

The one-click setup command handles the install on either.

Why use Kimi K2.6 instead of just Claude or GPT?

Kimi K2.6 is open-source and agentic-first.

Free within limits.

No subscription required.

Designed specifically for tool-calling workflows rather than chat.

It's the right pick when you want an agent, not a conversationalist.

Can I use Atomic Chat instead of OpenClaw?

Yes — Atomic Chat gives you the same Kimi K2.6 cloud access through a proper GUI.

You plug your Ollama API key in, pick Kimi K2.6 cloud, and you're chatting with the same model OpenClaw would route to.

No terminal required.

Related Reading

- OpenClaw + Opus 4.7 setup — same OpenClaw scaffolding, premium model.

- Ollama + Hermes guide — the local-first alternative stack.

- OpenClaw 4.20 update breakdown — latest shell features worth knowing.

Video notes + links to the tools 👉 https://www.skool.com/ai-profit-lab-7462/about

Learn how I make these videos 👉 https://aiprofitboardroom.com/

Get a FREE AI Course + Community + 1,000 AI Agents 👉 https://www.skool.com/ai-seo-with-julian-goldie-1553/about

Go set it up — OpenClaw Kimi K2.6 is the fastest free agent stack on the table right now.